Sunday 02 February 2025

As scientists, we’re constantly seeking ways to improve our understanding of the world and develop new technologies that can aid us in this pursuit. One area where significant progress is being made is in the field of artificial intelligence (AI), particularly when it comes to fine-tuning pre-trained models for specific tasks.

Traditionally, AI models are trained on vast amounts of data to learn general patterns and features. However, when applied to new tasks or domains, these models often require additional training to adapt to the specific requirements of that task. This can be time-consuming and computationally expensive, especially if the model is complex.

Researchers have been exploring ways to accelerate this fine-tuning process by leveraging physical priors: mathematical descriptions, typically equations, of the structure underlying the data. By building these priors into a new type of neural network architecture, they have developed a method called MoPPA (Multiscale Physics-Informed Patch Attention) that adapts pre-trained models to specific tasks efficiently.

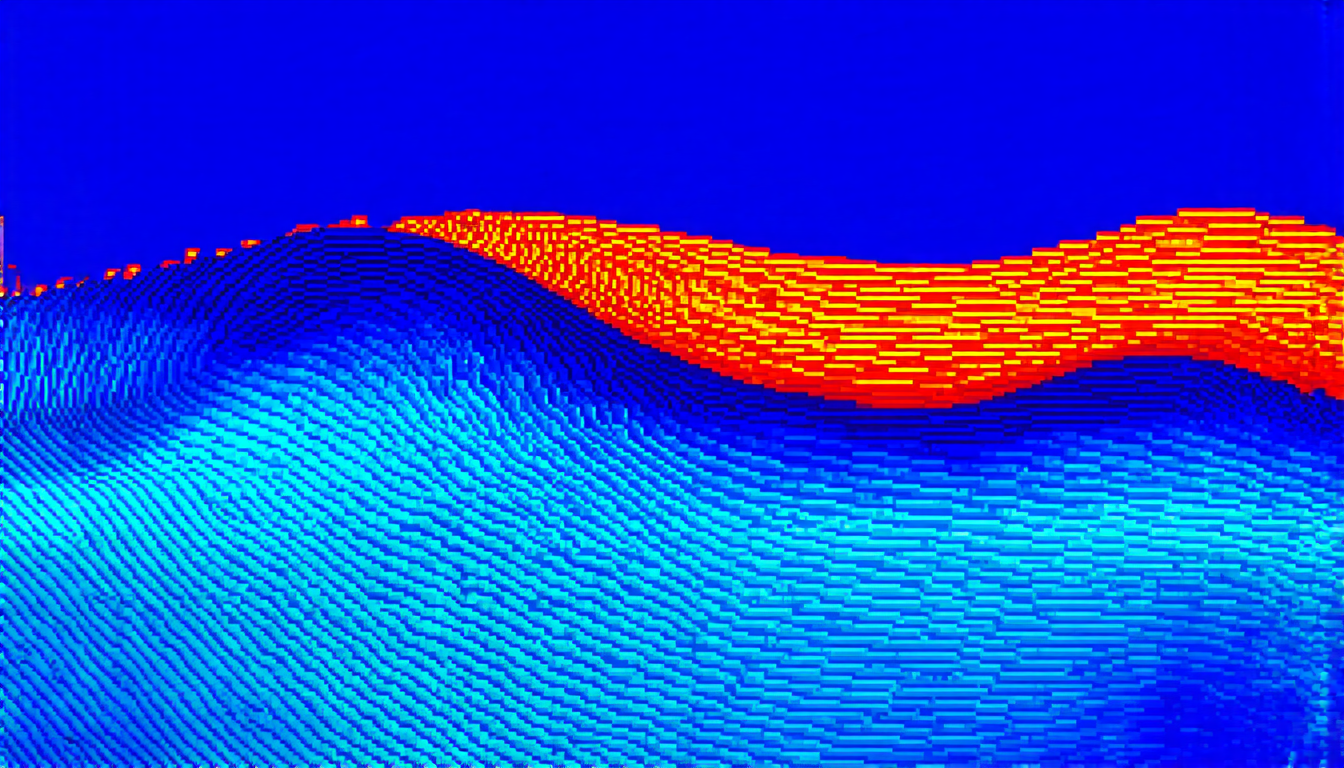

MoPPA’s key innovation lies in its ability to incorporate physical equations from various fields – such as heat transfer, wave propagation, and electrostatics – into the model’s architecture. These equations serve as a kind of ‘prior knowledge’ that guides the adaptation process, allowing the model to learn features that are relevant for the specific task at hand.
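To make the idea of a physical equation acting as "prior knowledge" more concrete, here is a minimal sketch of one explicit step of the heat equation applied to a grid of image-patch features. It is not MoPPA's actual implementation, which this article does not detail; the function names `laplacian` and `heat_step`, the 8x8 grid, and the diffusion rate `alpha` are all assumptions chosen for illustration.

```python
import numpy as np

def laplacian(u: np.ndarray) -> np.ndarray:
    """5-point finite-difference Laplacian with replicated (zero-flux) borders."""
    p = np.pad(u, 1, mode="edge")
    # Sum of the four neighbours (up, down, left, right) minus 4x the centre.
    return p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:] - 4.0 * u

def heat_step(u: np.ndarray, alpha: float = 0.2) -> np.ndarray:
    """One explicit Euler step of du/dt = alpha * laplacian(u).

    alpha must stay at or below 0.25 for this scheme to remain stable
    on a unit-spaced 2D grid.
    """
    return u + alpha * laplacian(u)

# Hypothetical 8x8 grid of scalar patch features with a single "hot" patch.
grid = np.zeros((8, 8))
grid[4, 4] = 1.0

# After one step, the hot patch has shared its value with its four neighbours.
smoothed = heat_step(grid)
```

The operator has no trainable weights: the way information spreads between neighbouring patches is fixed by the equation. A physics-informed layer could combine fixed operators like this one with a small number of learned parameters, which is one plausible reading of how such priors guide adaptation.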

The researchers tested MoPPA on a range of tasks, including image classification, object detection, and segmentation. In many cases, MoPPA matched or outperformed traditional fine-tuning methods while requiring significantly fewer parameters and computational resources.
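A little arithmetic shows why adapter-style methods in general can need far fewer trainable parameters than full fine-tuning. The sketch below compares updating one full weight matrix with training a small bottleneck module attached to it. The bottleneck design, the layer width of 768, and the bottleneck size of 8 are illustrative assumptions, not MoPPA's actual parameterization or reported figures.

```python
def full_finetune_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when a whole dense layer (weights + bias) is updated."""
    return d_in * d_out + d_out

def adapter_params(d_in: int, d_out: int, r: int) -> int:
    """Trainable parameters of a hypothetical bottleneck adapter:
    a down-projection (d_in x r) followed by an up-projection (r x d_out),
    with no biases."""
    return d_in * r + r * d_out

d = 768  # assumed layer width, typical of a mid-sized vision transformer
full = full_finetune_params(d, d)
small = adapter_params(d, d, r=8)
ratio = small / full

print(f"full fine-tuning: {full} parameters per layer")
print(f"adapter:          {small} parameters per layer")
print(f"adapter trains {ratio:.1%} of the layer's parameters")
```

Under these assumptions the adapter trains roughly 2% of the parameters of the layer it modifies, which is the kind of saving that parameter-efficient methods aim for.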

One of the most striking aspects of MoPPA is its ability to adapt to different physical domains. By incorporating equations from various fields into the model’s architecture, researchers can fine-tune pre-trained models for tasks that require knowledge about specific physical phenomena – such as heat transfer in materials science or wave propagation in acoustics.

MoPPA’s potential applications are vast and varied. For instance, it could be used to develop more accurate predictive models for climate change, optimize the design of complex systems like power grids or transportation networks, or even improve our understanding of biological processes at the molecular level.

While MoPPA is still an evolving technology, the early results are promising. By building physical priors into AI models, scientists may be able to improve both efficiency and accuracy in a wide range of fields.

Cite this article: “Physics-Informed Artificial Intelligence: A New Era of Efficiency and Accuracy”, The Science Archive, 2025.

Artificial Intelligence, Fine-Tuning, Pre-Trained Models, Physical Priors, Multiscale Physics-Informed Patch Attention, MoPPA, Neural Network Architecture, Image Classification, Object Detection, Segmentation