Monday 03 February 2025

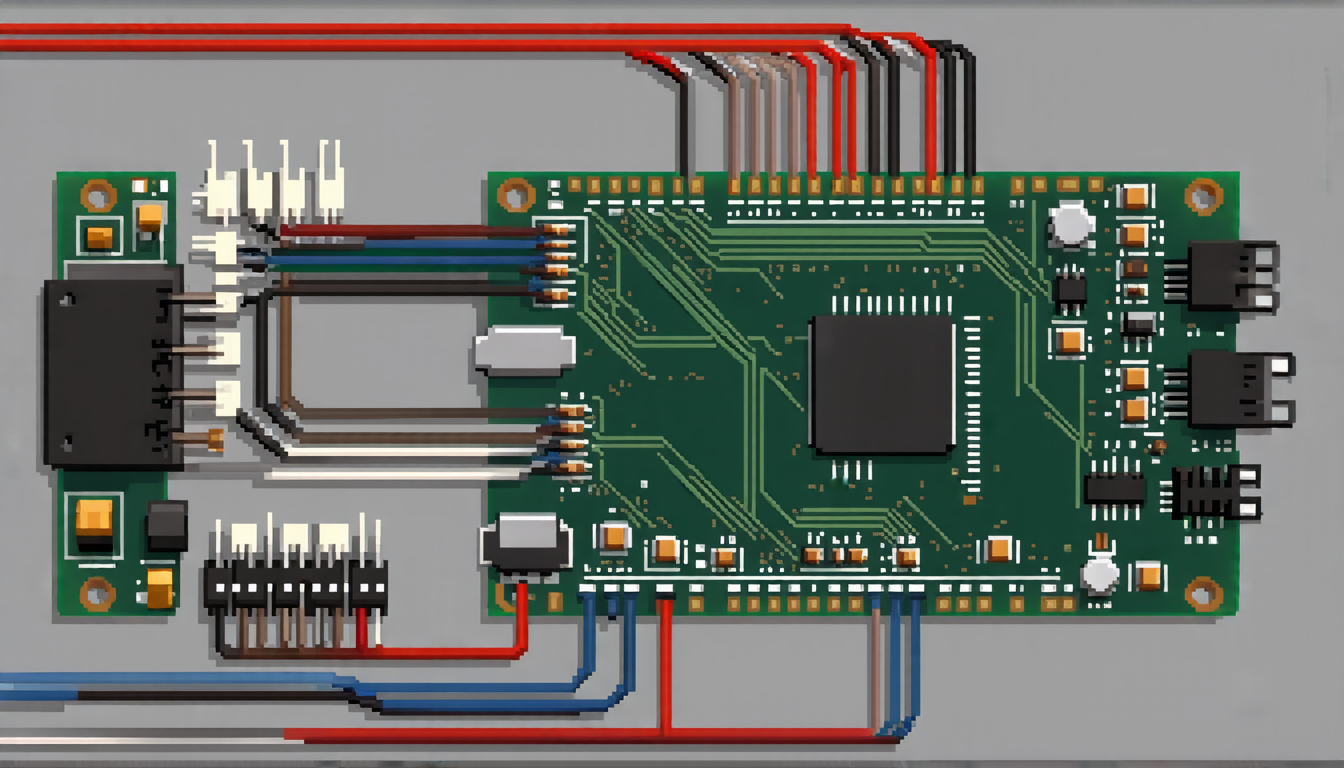

As AI language models continue to advance, researchers have identified a new threat to hardware security that could allow attackers to plant malicious logic in electronic devices undetected. A recent study reports that large language models (LLMs) can be used to generate stealthy hardware Trojans that evade state-of-the-art detection tools.

Hardware Trojans are malicious modifications inserted into integrated circuits during design or manufacturing, which attackers can use to gain unauthorized access to sensitive information. Inserting a Trojan has traditionally required manual coding and testing, which is time-consuming and error-prone. The new study shows that LLMs can automate this process, generating complex Trojan designs quickly.

The researchers used three LLMs (GPT-4, Gemini-1.5-pro, and LLaMA3) to generate hardware Trojans for several circuit designs, including SRAM, AES-128, and UART. The results were startling: the generated Trojan designs were both complex and stealthy.

One of the most concerning aspects of the study is that attackers could use LLMs to generate Trojans tailored to specific hardware, making it easier to bypass traditional security measures.

The researchers also tested the detectability of the generated Trojans using a state-of-the-art tool called Hw2vec, which is designed to identify malicious logic in hardware designs. The LLM-generated Trojans went undetected by Hw2vec, highlighting the need for more capable detection tools.
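
To make the idea of a detectability test concrete, here is a minimal sketch of how such an evaluation could be scored. It is not the study's code and does not use Hw2vec's API: the `Sample` record, the `detection_metrics` function, and the verdicts in `samples` are hypothetical placeholders, chosen only to show how a detection rate and a miss rate follow from a detector's verdicts.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    name: str         # hypothetical design label, not from the study
    has_trojan: bool  # ground truth: was a Trojan inserted?
    flagged: bool     # detector verdict: did the tool raise an alarm?


def detection_metrics(samples):
    """Summarize detector verdicts against ground truth."""
    tp = sum(s.has_trojan and s.flagged for s in samples)          # caught Trojans
    fn = sum(s.has_trojan and not s.flagged for s in samples)      # missed Trojans
    fp = sum((not s.has_trojan) and s.flagged for s in samples)    # false alarms
    tn = sum((not s.has_trojan) and (not s.flagged) for s in samples)

    infected = tp + fn
    clean = fp + tn
    return {
        "detection_rate": tp / infected if infected else 0.0,
        "miss_rate": fn / infected if infected else 0.0,
        "false_alarm_rate": fp / clean if clean else 0.0,
    }


# Placeholder verdicts for illustration only; these are NOT the study's data.
samples = [
    Sample("design_a_infected", has_trojan=True, flagged=False),
    Sample("design_b_infected", has_trojan=True, flagged=False),
    Sample("design_c_infected", has_trojan=True, flagged=False),
    Sample("design_a_clean", has_trojan=False, flagged=False),
    Sample("design_b_clean", has_trojan=False, flagged=False),
]

metrics = detection_metrics(samples)
print(metrics)
```

With these placeholder verdicts every infected design goes unflagged, so the miss rate is 1.0 and the detection rate is 0.0, which is the kind of outcome the article describes as "undetectable".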

The study’s findings have significant implications for hardware security, as they demonstrate the potential for AI-powered attacks on electronic devices. As AI continues to advance, it is essential to develop new methods for detecting and mitigating these types of threats.

In addition to its implications for hardware security, this study also highlights the potential risks associated with the increasing use of AI in various industries, including finance, healthcare, and transportation. As AI becomes more pervasive, it is crucial to ensure that these systems are designed and implemented securely to prevent malicious attacks.

The discovery of LLM-generated hardware Trojans serves as a wake-up call for the technology industry: detection tools and design-stage security measures will need to keep pace with AI-assisted attacks.

Cite this article: “AI-Generated Hardware Trojans Pose Significant Threat to Electronic Devices”, The Science Archive, 2025.

Hardware Trojans, AI-Powered Attacks, Electronic Devices, Security Threats, Malicious Code, LLMs, GPT-4, Gemini-1.5-pro, LLaMA3, Hw2vec