Thursday 23 January 2025

A team of researchers has made a significant breakthrough in understanding how statistical models can be used to make predictions and estimate parameters. The study, published recently, sheds light on the behavior of empirical Bayes estimators in linear regression models.

Empirical Bayes estimation is a statistical method in which the hyperparameters of a prior distribution are estimated from the data, typically by maximizing the marginal likelihood, instead of being fixed in advance. In linear regression, this usually means letting the data choose how strongly the coefficients are regularized, then using the fitted model to predict new data. The goal is to minimize the error between predicted and actual values.

The researchers found that in certain cases, the empirical Bayes estimator can diverge, meaning it grows without bound instead of settling at a finite value. This happens when the condition $\|\Phi^\top y\|_2 \le \|\Phi\|_F$ is satisfied, where $\Phi$ is the design matrix, $y$ is the response vector, $\|\cdot\|_2$ is the Euclidean norm, and $\|\cdot\|_F$ is the Frobenius norm.
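As a rough illustration of where a condition of this form can come from, consider a simplified setting that is an assumption of this note and not necessarily the one analyzed in the study: ridge regression with a single regressor $\phi$ (so $\Phi$ has one column), a Gaussian prior $w \sim \mathcal{N}(0, 1/\lambda)$ on the coefficient, and unit noise variance. Writing $\alpha = 1/\lambda$ and $n$ for the number of observations, the marginal distribution of $y$ is $\mathcal{N}(0, I + \alpha\phi\phi^\top)$, which gives:

$$
\log Z(\alpha) = -\frac{1}{2}\left[ n\log 2\pi + \log\left(1+\alpha\|\phi\|_2^2\right) + \|y\|_2^2 - \frac{\alpha(\phi^\top y)^2}{1+\alpha\|\phi\|_2^2} \right],
\qquad
\frac{d}{d\alpha}\log Z(\alpha) = \frac{(\phi^\top y)^2 - \|\phi\|_2^2 - \alpha\|\phi\|_2^4}{2\left(1+\alpha\|\phi\|_2^2\right)^2}.
$$

When $|\phi^\top y| \le \|\phi\|_2$, which is the single-column form of the condition above, the derivative is non-positive for every $\alpha \ge 0$, so the maximum sits at $\alpha = 0$ and the estimate of $\lambda$ diverges. Otherwise the maximizer is finite, $\hat\lambda = \|\phi\|_2^4 / \big((\phi^\top y)^2 - \|\phi\|_2^2\big)$. In both cases the derivative changes sign at most once, so in this toy setting $\log Z$ rises to at most one peak.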

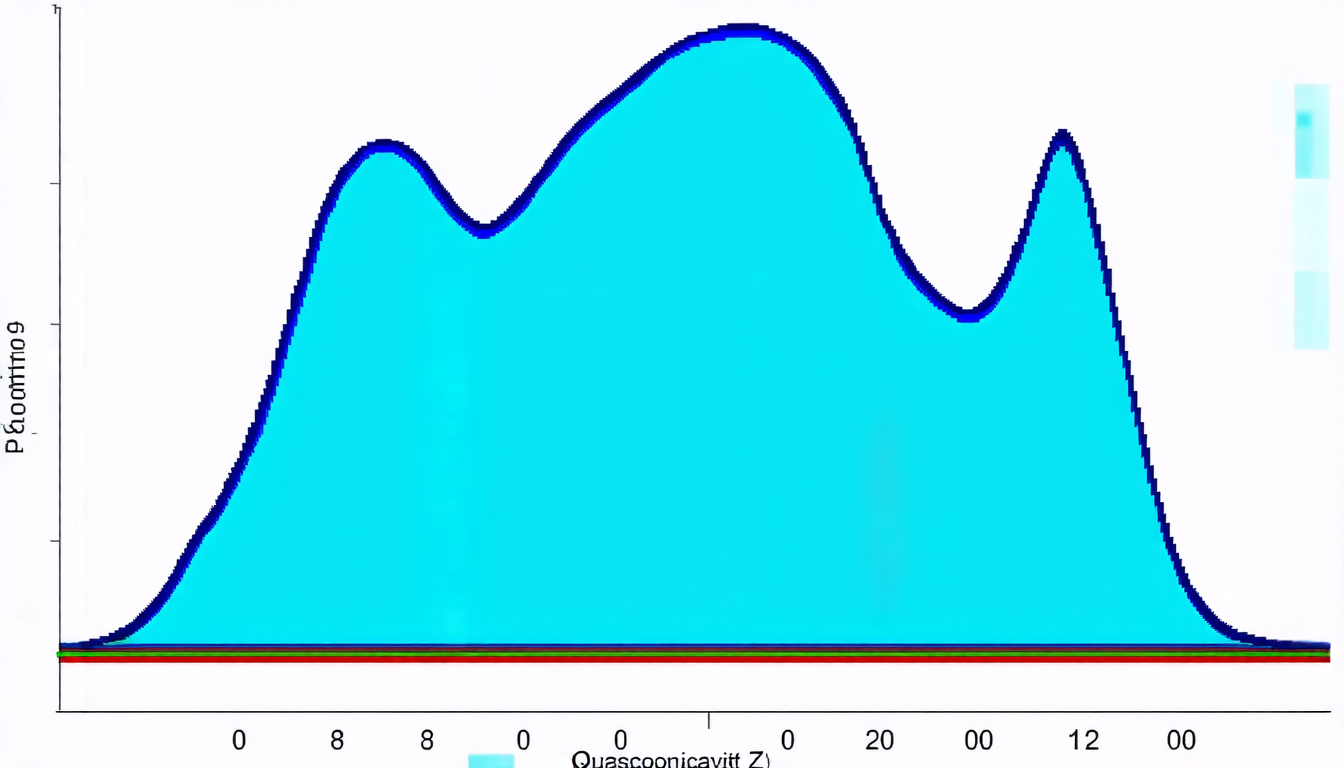

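The study also showed that under this condition, the marginal likelihood Z(λ) is quasiconcave, which means that, as a function of λ, it does not have several separated peaks. Quasiconcavity is a weaker property than concavity: a function is quasiconcave when each of its upper level sets is convex, so every concave function is quasiconcave, but a quasiconcave function need not be concave.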
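The behavior described above can be checked numerically on a toy problem. The sketch below is not the study's method: it assumes ridge regression with a single regressor, a Gaussian prior with precision λ, and unit noise variance, and the helper names (`log_marginal`, `is_unimodal`, `grid_argmax`) and the data are made up for illustration. It evaluates the log marginal likelihood on a log-spaced grid of λ, locates the grid maximizer, and tests that the values never rise again after they start to fall.

```python
import math


def log_marginal(lam, phi, y):
    """Log marginal likelihood of y for a single regressor phi.

    Assumed model (illustrative only): y = w * phi + noise,
    w ~ N(0, 1/lam), noise ~ N(0, I).
    """
    a = sum(p * p for p in phi)                    # ||phi||^2
    b = sum(p * t for p, t in zip(phi, y)) ** 2    # (phi^T y)^2
    yy = sum(t * t for t in y)                     # ||y||^2
    alpha = 1.0 / lam                              # prior variance
    n = len(y)
    return -0.5 * (
        n * math.log(2 * math.pi)
        + math.log1p(alpha * a)
        + yy
        - alpha * b / (1.0 + alpha * a)
    )


def is_unimodal(values, tol=1e-12):
    """True if the sequence never rises again once it has started to fall."""
    falling = False
    for prev, cur in zip(values, values[1:]):
        if cur < prev - tol:
            falling = True
        elif cur > prev + tol and falling:
            return False
    return True


def grid_argmax(phi, y, grid):
    """Return the grid value of lambda with the largest log marginal likelihood."""
    values = [log_marginal(lam, phi, y) for lam in grid]
    best = max(range(len(grid)), key=values.__getitem__)
    return grid[best], values


# Log-spaced grid of lambda from 1e-3 to 1e6.
grid = [10 ** (k / 10) for k in range(-30, 61)]

phi = [1.0, 2.0, 2.0]        # ||phi||^2 = 9
y_strong = [1.0, 2.0, 2.0]   # (phi^T y)^2 = 81 > 9: condition not satisfied
y_weak = [0.5, -0.5, 0.5]    # (phi^T y)^2 = 0.25 <= 9: condition satisfied

lam_strong, vals_strong = grid_argmax(phi, y_strong, grid)
lam_weak, vals_weak = grid_argmax(phi, y_weak, grid)

print("strong signal: grid maximizer", lam_strong, "unimodal:", is_unimodal(vals_strong))
print("weak signal:   grid maximizer", lam_weak, "unimodal:", is_unimodal(vals_weak))
```

With `y_strong` the maximizer falls in the interior of the grid. With `y_weak` it lands on the largest λ in the grid, and extending the grid only pushes it further out, which is how a diverging estimate shows up in a finite search.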

The researchers demonstrated that this property holds for various linear regression models, including ridge, lasso, and group lasso. These models are commonly used in machine learning and statistics to regularize the coefficients of the linear model and prevent overfitting.
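For readers unfamiliar with these three models, the difference lies in the penalty added to the least-squares objective. The sketch below writes out the standard textbook penalties in plain Python; the function names and the example grouping are illustrative assumptions, and the group lasso is shown without the per-group size weights that some formulations include.

```python
import math


def ridge_penalty(w):
    """Squared Euclidean norm of the coefficients."""
    return sum(v * v for v in w)


def lasso_penalty(w):
    """Sum of absolute values of the coefficients (L1 norm)."""
    return sum(abs(v) for v in w)


def group_lasso_penalty(w, groups):
    """Sum over groups of the Euclidean norm of each group's coefficients.

    `groups` is a list of index lists, e.g. [[0, 1], [2]].
    """
    return sum(math.sqrt(sum(w[i] ** 2 for i in g)) for g in groups)


w = [3.0, -4.0, 0.0]
print(ridge_penalty(w))
print(lasso_penalty(w))
print(group_lasso_penalty(w, [[0, 1], [2]]))
```

When every group contains a single coefficient, the group lasso penalty reduces to the lasso penalty.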

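The findings have important implications for statistical modeling and data analysis. They suggest that a diverging empirical Bayes estimate can reflect the data and the model rather than a numerical failure, and that when the marginal likelihood is quasiconcave, a search for its maximum does not have to contend with several separated peaks.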

In practical terms, this means that researchers and analysts can use these methods to improve their predictive models and gain a better understanding of complex systems. The study’s results also highlight the importance of considering the properties of marginal likelihoods in statistical modeling.

Overall, the research provides valuable insights into the behavior of empirical Bayes estimators and has significant implications for the field of statistics and machine learning.

Cite this article: “Behavioral Insights into Empirical Bayes Estimation in Linear Regression Models”, The Science Archive, 2025.

Statistical Models, Empirical Bayes Estimation, Linear Regression, Predictions, Parameters, Estimation, Error Minimization, Quasiconcavity, Marginal Likelihood, Statistical Modeling