Thursday 22 May 2025

Researchers have developed a system that lets robots learn complex assembly tasks using nothing but visual feedback, an advance that could change the way machines interact with their environment.

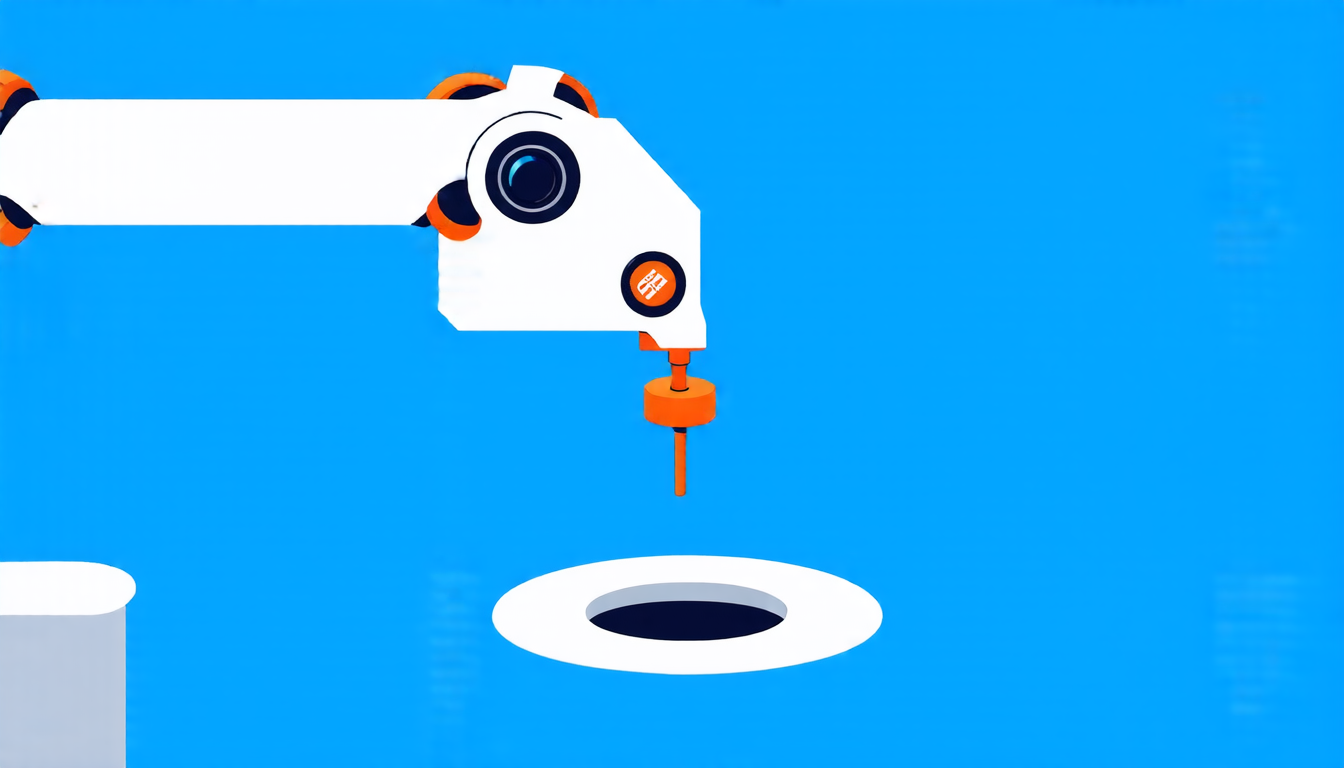

The system, known as Separate Primitive Policy (S2P), combines machine learning and computer vision to enable robots to perform delicate tasks such as inserting pegs into holes. By observing visual cues from its environment, an S2P-equipped robot can learn to manipulate objects precisely.

One of the key challenges facing robotics researchers is the development of systems that can adapt to new situations and environments without requiring extensive reprogramming or recalibration. Traditional approaches often rely on complex sensors and algorithms that are difficult to implement and maintain, making them impractical for widespread use.

S2P addresses this challenge by splitting the learning process into two distinct phases: location and insertion. In the location phase, the robot uses computer vision to identify the target object (in this case, a peg) and learns to move its end effector into position precisely above the hole. In the insertion phase, the robot applies a separately learned insertion policy to insert the peg.
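The two-phase split can be illustrated with a toy sketch. The Python below is not the researchers' implementation. It assumes a simplified simulated world, a hypothetical `observe_offset` function standing in for the vision module, and two hand-written stand-in policies (`location_policy`, `insertion_policy`) where S2P would use learned ones. All names, gains and tolerances are illustrative assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class State:
    x: float  # lateral position of the peg tip (metres)
    y: float
    z: float  # height above the hole surface; negative means inserted


# Hypothetical task constants, chosen only for this illustration.
HOLE_XY = (0.0, 0.0)
ALIGN_TOL = 0.002     # lateral error at which insertion may begin
INSERT_DEPTH = 0.02   # depth that counts as a completed insertion


def observe_offset(state):
    """Stand-in for a vision module: lateral offset from peg to hole."""
    return HOLE_XY[0] - state.x, HOLE_XY[1] - state.y


def location_policy(offset, gain=0.5):
    """Primitive 1: move laterally toward the hole, keep height fixed."""
    dx, dy = offset
    return gain * dx, gain * dy, 0.0


def insertion_policy(offset, gain=0.5, step=0.002):
    """Primitive 2: descend while correcting small lateral errors."""
    dx, dy = offset
    return gain * dx, gain * dy, -step


def run_episode(state, max_steps=200):
    """Run location first, then switch once to insertion when aligned."""
    phases = []
    inserting = False
    for _ in range(max_steps):
        if state.z <= -INSERT_DEPTH:
            return state, phases, True
        offset = observe_offset(state)
        if not inserting and math.hypot(*offset) < ALIGN_TOL:
            inserting = True
        policy = insertion_policy if inserting else location_policy
        dx, dy, dz = policy(offset)
        state = State(state.x + dx, state.y + dy, state.z + dz)
        phases.append("insertion" if inserting else "location")
    return state, phases, state.z <= -INSERT_DEPTH


if __name__ == "__main__":
    final, phases, ok = run_episode(State(0.05, -0.03, 0.01))
    print("success:", ok)
    print("location steps:", phases.count("location"))
    print("insertion steps:", phases.count("insertion"))
```

Because each primitive is a separate function with the same input and output shape, either one could in principle be swapped out without touching the other, which is the kind of modularity the two-phase design is meant to provide.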

This approach has several advantages over traditional methods. For one, it allows robots to learn from visual feedback alone, without relying on expensive additional sensors. Additionally, S2P’s modular design makes it easier to adapt to new tasks and environments by updating only the relevant policy.

To test the capabilities of S2P, researchers trained a robot to perform a series of challenging assembly tasks involving different shapes and sizes of pegs and holes. The results were impressive: the robot was able to successfully complete each task with high accuracy and precision, even in the presence of noise and distractions.

The potential applications of S2P are vast and varied. In manufacturing settings, robots equipped with S2P could be used to assemble complex products with unprecedented speed and accuracy. In healthcare settings, S2P-equipped robots could assist surgeons during delicate procedures or aid in the development of new medical devices.

As researchers continue to refine and extend S2P, the technology has the potential to change how robots are used. By enabling them to learn from visual feedback alone, S2P could have a significant impact on industries ranging from manufacturing to healthcare.

Cite this article: “Robots Learn Complex Tasks Through Visual Feedback Alone”, The Science Archive, 2025.

Robots, Machine Learning, Computer Vision, Visual Feedback, Assembly Tasks, Pegs, Holes, Manufacturing, Healthcare, Automation