Thursday 23 January 2025

The quest for privacy in machine learning has led researchers to develop novel techniques that balance accuracy and confidentiality. One such approach is differentially private Federated Averaging (DP-FA), which lets multiple clients jointly train a shared model while preserving the privacy of each client's data.

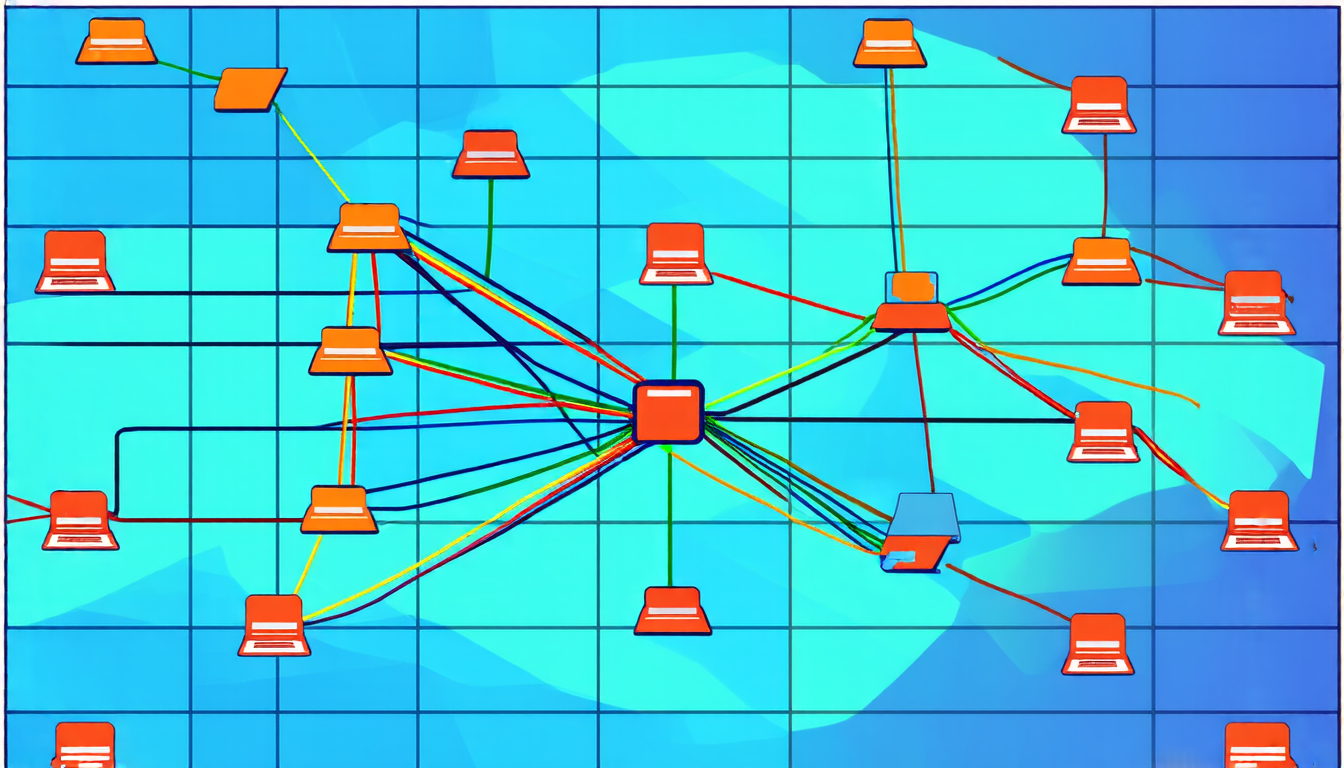

In this method, each client computes stochastic gradients of its local objective function and sends them to a central server. The server aggregates these gradients with a weighted average, weighting each client in proportion to its number of training samples. This process is repeated over many rounds, so the shared model learns from all clients' data while the raw data itself never leaves the clients.
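To make the aggregation step concrete, here is a minimal Python sketch of the sample-weighted average described above, assuming each client's update is a NumPy vector of the same length. The function name `federated_average` is hypothetical, and the sketch deliberately leaves out the differential-privacy step and any quantization, since the article does not specify how those are applied.

```python
import numpy as np


def federated_average(client_updates, client_sizes):
    """Hypothetical helper: average client updates, weighting each by its sample count."""
    updates = np.stack([np.asarray(u, dtype=float) for u in client_updates])
    sizes = np.asarray(client_sizes, dtype=float)
    if updates.shape[0] != sizes.shape[0]:
        raise ValueError("need exactly one sample count per client")
    # Weights are proportional to the number of samples and sum to 1.
    weights = sizes / sizes.sum()
    # Contract over the client axis: sum_k weights[k] * updates[k].
    return weights @ updates


if __name__ == "__main__":
    grads = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    sizes = [100, 300, 100]
    print(federated_average(grads, sizes))
```

With 100, 300 and 100 samples, the three clients receive weights 0.2, 0.6 and 0.2, so the middle client's update dominates the aggregate.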

However, DP-FA faces a significant challenge: it must be robust to the quantization errors introduced when clients and the server communicate. These errors arise because the clients' updates are compressed to a limited number of bits before they are transmitted. To address this issue, researchers have developed a technique called Lattice-based Randomized Quantization (LRSUQ).

LRSUQ works by mapping values onto a lattice structure and perturbing them with a random noise vector, so that the resulting quantization error is random rather than a fixed distortion of the input. This approach offers several benefits, including improved robustness against errors and reduced computational complexity.
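The article does not describe LRSUQ's construction in detail. As a hedged illustration of the general idea behind randomized lattice quantization, the sketch below implements the classic subtractive dithered (universal) quantizer on the scaled integer lattice `step`·Z^n. This is a simpler, well-known relative, not the researchers' method. It assumes the client and server share a random seed so that both can generate the same dither; the names `encode`, `decode`, `step` and `seed` are hypothetical.

```python
import numpy as np


def encode(x, step, rng):
    """Hypothetical client side: add a shared random dither, then round to the lattice step*Z^n."""
    x = np.asarray(x, dtype=float)
    u = rng.uniform(-step / 2, step / 2, size=x.shape)
    # Only these integer lattice indices would be transmitted.
    return np.round((x + u) / step).astype(np.int64)


def decode(indices, step, rng):
    """Hypothetical server side: regenerate the same dither from the shared seed and subtract it."""
    u = rng.uniform(-step / 2, step / 2, size=np.shape(indices))
    return step * indices - u


if __name__ == "__main__":
    seed, step = 1234, 0.5
    x = np.full(100_000, 0.3)  # constant input, to expose how the error behaves
    idx = encode(x, step, np.random.default_rng(seed))
    x_hat = decode(idx, step, np.random.default_rng(seed))
    err = x_hat - x
    # Error stays within [-step/2, step/2], with mean near 0 and variance near step**2 / 12.
    print(err.min(), err.max(), err.mean(), err.var(), step**2 / 12)
```

Because the server subtracts the same dither the client added, the reconstruction error is uniformly distributed on [-step/2, step/2] and independent of the input, with variance step²/12. Plain rounding without a dither would instead map the constant input 0.3 to 0.5 every time, a fixed error of 0.2. Input-independent, noise-like error of this kind is one reason randomized quantizers are studied alongside differential privacy.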

The researchers also demonstrated the efficacy of DP-FA with LRSUQ through extensive simulations. These showed that the method can achieve high accuracy while maintaining strong privacy guarantees, even when clients have heterogeneous data distributions.

The implications of this research are far-reaching, because it could enable more secure and private machine learning systems. This could lead to a wide range of applications, from medical diagnosis and personalized recommendations to autonomous vehicles and financial analysis.

In summary, DP-FA with LRSUQ represents a significant step forward in the quest for privacy in machine learning. By combining robust quantization techniques with differentially private aggregation methods, researchers have created a powerful tool that can be used to develop more secure and accurate AI systems.

Cite this article: “Private Federated Learning: A Breakthrough in Secure Machine Intelligence”, The Science Archive, 2025.

Machine Learning, Privacy, Differential Privacy, Federated Averaging, Quantization Errors, Lattice-Based Randomized Quantization, Robustness, Accuracy, Confidentiality, Security.