Thursday 23 January 2025

Efficient data compression is a long-standing problem in computer science and information theory. In recent years, researchers have made significant strides in understanding the fundamental limits of compressing complex data structures such as graphs and networks. A new study examines the rate-distortion-perception tradeoff for graph sources, offering insight into how compression algorithms for such data can be optimized.

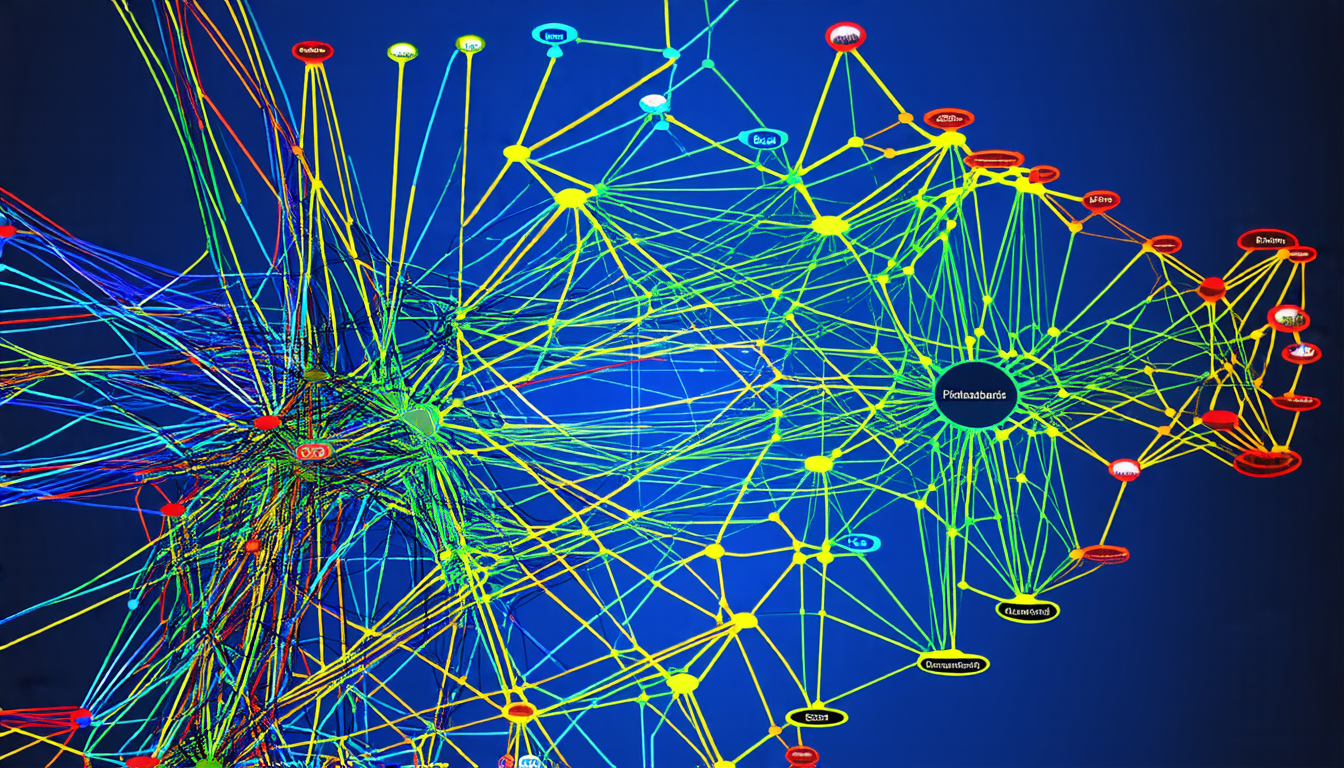

To put it simply, compression is all about reducing the size of large datasets while preserving their essential features. The problem becomes more challenging when dealing with complex structures like graphs, which are used to represent relationships between objects or entities in various fields such as social networks, biological systems, and transportation networks. In these cases, compressing the graph data requires not only minimizing its size but also maintaining the accuracy of the underlying structure.

The researchers tackled this problem by introducing a framework that combines rate-distortion theory with perception measures to optimize compression algorithms for graph sources. The key insight is that the optimal tradeoff between compression rate and distortion depends on specific properties of the graph, such as its degree distribution (how many connections each node has) or its motif distribution (how often small subgraph patterns such as triangles occur). Understanding these properties makes it possible to design compression schemes that balance compactness against preserving the integrity of the graph structure.

One of the most significant contributions of the study is the development of a rate-distortion-perception function that captures the fundamental limits of compressing graph data. This function provides a mathematical framework for optimizing compression algorithms, allowing researchers to identify the optimal balance between compression rate and distortion for a given graph source.
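For orientation, the general form that a rate-distortion-perception function usually takes in the information-theory literature is sketched below. The notation is an assumption made for illustration, not taken from the study: $G$ is the source graph, $\hat{G}$ its reconstruction, $\Delta$ a distortion measure, and $d$ a divergence between distributions. The study's graph-specific definition may differ, for example by measuring perception through degree or motif distributions.

$$
R(D, P) \;=\; \min_{p_{\hat{G} \mid G}} \; I(G; \hat{G})
\quad \text{subject to} \quad
\mathbb{E}\big[\Delta(G, \hat{G})\big] \le D,
\qquad
d\big(p_G, p_{\hat{G}}\big) \le P .
$$

Here $I(G; \hat{G})$ is the mutual information between the source and its reconstruction, $D$ is the allowed average distortion, and $P$ is the allowed perceptual gap. Setting $P = \infty$ removes the perception constraint and recovers the classical rate-distortion function.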

The findings have far-reaching implications for various applications, including data storage and transmission in computer networks, biological sequence analysis, and social network analysis. By developing more efficient compression schemes that take into account the unique properties of graph data, researchers can reduce the amount of data required to store or transmit large graphs, making it easier to manage and analyze complex systems.

The study also highlights the importance of considering perception measures in addition to traditional distortion metrics. A distortion metric asks how closely the reconstructed graph matches the original, for instance edge by edge, whereas a perception measure asks whether the reconstruction still has the statistical character of the source, such as a similar degree distribution. In many cases, preserving the graph structure matters more than minimizing its size, especially in sensitive applications such as network reliability or biological sequence analysis. By incorporating perception measures into compression algorithms, researchers can ensure that the compressed data remains faithful to the original graph structure.
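The difference between a distortion metric and a perception measure can be made concrete with a toy example. The following is a minimal Python sketch under stated assumptions, not the study's method: distortion is taken to be the fraction of possible edges on which two graphs disagree, and perception is taken to be the total variation distance between their degree distributions. The function names and the example graphs are hypothetical.

```python
from collections import Counter


def edge_hamming_distortion(n, edges_a, edges_b):
    """Fraction of the n*(n-1)/2 possible undirected edges on which two graphs disagree."""
    a = {frozenset(e) for e in edges_a}
    b = {frozenset(e) for e in edges_b}
    possible = n * (n - 1) // 2
    return len(a ^ b) / possible


def degree_distribution(n, edges):
    """Empirical degree distribution: maps degree -> fraction of nodes with that degree."""
    degree = [0] * n
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    return {d: c / n for d, c in Counter(degree).items()}


def total_variation(p, q):
    """Total variation distance between two discrete distributions given as dicts."""
    support = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in support)


# Toy graphs on 4 nodes (illustrative data, not from the study).
N = 4
original = [(0, 1), (1, 2), (2, 3)]    # path 0-1-2-3
rewired = [(1, 0), (0, 2), (2, 3)]     # path 1-0-2-3: other edges, same shape
star = [(0, 1), (0, 2), (0, 3)]        # hub-and-spoke: a different shape

for name, candidate in [("rewired", rewired), ("star", star)]:
    distortion = edge_hamming_distortion(N, original, candidate)
    perception = total_variation(
        degree_distribution(N, original), degree_distribution(N, candidate)
    )
    print(f"{name}: distortion={distortion:.3f}, perception gap={perception:.3f}")
```

In this sketch the rewired path differs from the original on some edges yet has an identical degree distribution, so its distortion is nonzero while its perception gap is zero. The star graph scores worse on both counts. This is the kind of distinction that a perception constraint is meant to capture alongside a conventional distortion metric.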

In summary, the study provides a significant advance in our understanding of the rate-distortion-perception tradeoff for graph sources, offering valuable insights into how to optimize compression algorithms for complex data structures.

Cite this article: “Optimizing Compression Algorithms for Graph Sources”, The Science Archive, 2025.

Graph Compression, Rate-Distortion-Perception Tradeoff, Data Storage, Network Transmission, Biological Sequence Analysis, Social Network Analysis, Computer Science, Information Theory, Graph Data Structures, Compression Algorithms