Friday 31 January 2025

Scientists have made a significant breakthrough in the field of artificial intelligence, developing a new method for training energy-based models that can learn complex patterns in data. The approach, known as energy discrepancy, offers an alternative way to train these models, which are capable of generating high-quality synthetic data.

The researchers began by exploring the limitations of traditional methods for training energy-based models. These models are typically trained with contrastive divergence, a technique that iteratively updates the model's parameters by comparing positive samples taken from the data with negative samples drawn from the model itself. Producing those negative samples usually means running a sampling procedure at every update, which can be slow and computationally expensive, especially when dealing with large datasets.
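To make the positive and negative samples concrete, here is a minimal sketch of a single contrastive divergence update. It assumes a small binary restricted Boltzmann machine, a model the article does not name and which is chosen here purely for illustration; the function name `cd1_update` and its parameters are likewise hypothetical.

```python
import numpy as np


def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))


def cd1_update(v_data, W, b, c, lr, rng):
    """One CD-1 step for a binary RBM (an illustrative assumption).

    v_data: (n, n_visible) batch of 0/1 data, the positive samples
    W: (n_visible, n_hidden) weights, b: visible biases, c: hidden biases
    """
    # Positive phase: hidden activations driven by the data.
    h_prob = sigmoid(v_data @ W + c)
    h_sample = (rng.random(h_prob.shape) < h_prob).astype(float)

    # Negative phase: one Gibbs step produces samples from the model.
    v_prob = sigmoid(h_sample @ W.T + b)
    v_neg = (rng.random(v_prob.shape) < v_prob).astype(float)
    h_neg_prob = sigmoid(v_neg @ W + c)

    # Update: data statistics minus model statistics.
    n = v_data.shape[0]
    W_new = W + lr * (v_data.T @ h_prob - v_neg.T @ h_neg_prob) / n
    b_new = b + lr * (v_data - v_neg).mean(axis=0)
    c_new = c + lr * (h_prob - h_neg_prob).mean(axis=0)
    return W_new, b_new, c_new
```

The negative phase is where the cost comes from: every parameter update needs fresh samples from the model, and more accurate variants run many Gibbs steps instead of one.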

To address these challenges, the team developed a new method called energy discrepancy. Rather than relying on samples drawn from the model, this approach perturbs the data and uses the perturbed points to update the parameters of the model, which the researchers describe as more efficient and effective than traditional methods. The key innovation lies in the way the perturbations are generated, which allows the model to learn more complex patterns in the data.
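The article does not say how the perturbations are generated, so the following is only a sketch under stated assumptions. It uses Gaussian noise on continuous data and a stabilised loss of the form log(w/M + (1/M) Σ exp(E(x) − E(x′))), which is one way an energy discrepancy objective can be estimated. The names `energy_discrepancy_loss`, `t`, `m` and `w` are hypothetical, and the discrete settings mentioned in the article (text, graphs) would need a different perturbation.

```python
import numpy as np


def energy_discrepancy_loss(energy_fn, x, t=0.25, m=16, w=1.0, rng=None):
    """Estimate an energy-discrepancy-style loss (Gaussian perturbation assumed).

    energy_fn maps an array of shape (..., d) to energies of shape (...).
    x has shape (n, d); m perturbed points are drawn per data point; w > 0.
    """
    rng = np.random.default_rng() if rng is None else rng
    n, d = x.shape
    # Perturb each data point once, then draw m further perturbations of it.
    xi = rng.standard_normal((n, 1, d))
    xi_prime = rng.standard_normal((n, m, d))
    x_pert = x[:, None, :] + np.sqrt(t) * xi + np.sqrt(t) * xi_prime
    # Energy of the data point minus energy of each perturbed point.
    diff = energy_fn(x)[:, None] - energy_fn(x_pert)  # shape (n, m)
    # Stable evaluation of log((w + sum_j exp(diff_j)) / m).
    logits = np.concatenate([np.full((n, 1), np.log(w)), diff], axis=1)
    top = logits.max(axis=1, keepdims=True)
    lse = top[:, 0] + np.log(np.exp(logits - top).sum(axis=1))
    return float(np.mean(lse - np.log(m)))


def quadratic_energy(z):
    # Energy of a standard Gaussian, used only as a toy example.
    return 0.5 * np.sum(z ** 2, axis=-1)
```

In this sketch no samples are drawn from the model: the loss only evaluates the energy at data points and at their perturbed copies, so it can be minimised with ordinary gradient descent on the parameters of `energy_fn`.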

The researchers tested their new method on a range of datasets, including images, text, and graph data. They found that energy discrepancy outperformed traditional methods in terms of both quality and efficiency. In particular, they were able to generate high-quality synthetic data that closely matched the characteristics of the original dataset.

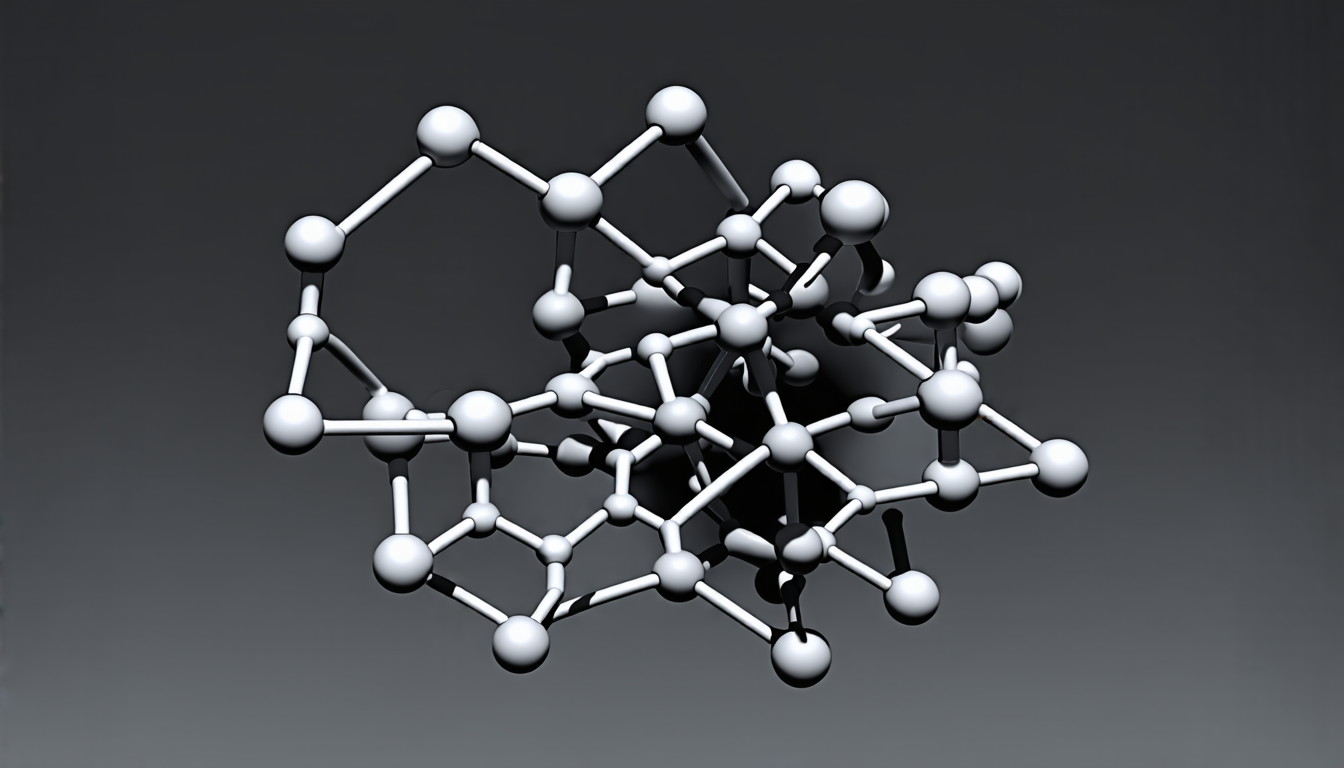

One of the most impressive applications of energy discrepancy is in generating realistic graphs. Graphs are complex structures that consist of nodes and edges, and are used to model a wide range of real-world phenomena, from social networks to molecular structures. The ability to generate high-quality synthetic graphs could have significant implications for fields such as data science and machine learning.

The researchers believe that energy discrepancy has the potential to revolutionize the field of artificial intelligence. By allowing models to learn more complex patterns in data, it could enable a new generation of AI systems that are capable of tackling challenging problems in areas such as image recognition, natural language processing, and decision-making.

Beyond core AI research, energy discrepancy also has implications for applied fields such as computer vision, robotics, and biomedicine. For example, the ability to generate realistic images could be used to create more accurate training data for autonomous vehicles or medical imaging systems.

Overall, the development of energy discrepancy is a significant milestone in the field of artificial intelligence. It represents a new approach to training energy-based models that has the potential to enable a wide range of applications and improve our understanding of complex phenomena.

Cite this article: “Scientists Develop New AI Method for Training Energy-Based Models”, The Science Archive, 2025.

Artificial Intelligence, Energy-Based Models, Data Patterns, Training Methods, Contrastive Divergence, Perturbations, Graph Data, Synthetic Data, Machine Learning, AI Systems.