Saturday 01 February 2025

Bias in machine learning algorithms has long been a topic of interest in the scientific community. Recently, researchers have taken a closer look at Federated Averaging (FedAvg), a popular algorithm for federated learning, in which a model is trained across many devices without pooling their data. In a new study, scientists characterize the bias inherent in FedAvg and its impact on the accuracy of trained models.

To understand this phenomenon, it helps to pin down what bias means. In general, bias is systematic error: a consistent offset between what an algorithm produces and what it should produce, as opposed to random noise that averages out. In discussions of fairness, the term covers models that favor certain classes over others or produce results skewed towards a particular demographic. For a training algorithm such as FedAvg, it also covers a trained model that consistently lands away from the best achievable one.

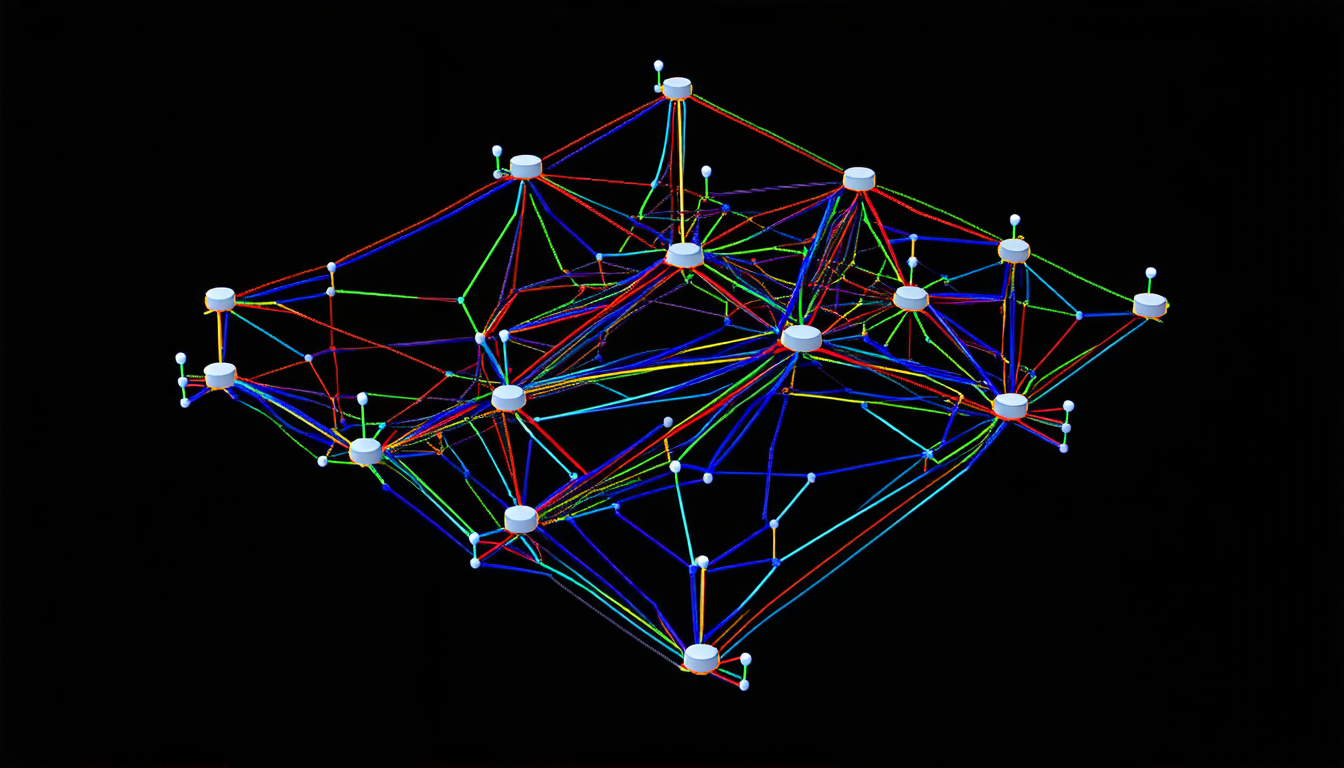

FedAvg, developed by researchers at Google, is a widely used algorithm for training machine learning models on decentralized data. In each round, participating devices or nodes train the current model on their own data for a few steps and send the result to a server, which averages these updates into a new global model. Computation is spread across the nodes and the raw data stays where it was collected. While FedAvg has shown impressive results in various applications, the study argues that its bias has received comparatively little attention.
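The aggregation loop described above can be sketched in a few lines of Python. This is a minimal illustration rather than the study's code: the scalar least-squares model, the function names `local_gd` and `fedavg`, and the hyperparameter values are assumptions chosen for brevity. Weighting each client by its share of the data follows the original FedAvg formulation.

```python
def local_gd(w, data, lr, steps):
    """Run `steps` gradient steps on the client's loss 0.5 * mean((w*x - y)**2).

    Full-batch gradients keep the sketch deterministic; practical FedAvg
    uses mini-batch stochastic gradients here.
    """
    for _ in range(steps):
        grad = sum((w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def fedavg(clients, rounds, lr=0.1, local_steps=5, w0=0.0):
    """Hypothetical scalar FedAvg: local training, then a weighted average."""
    w = w0
    total = sum(len(data) for data in clients)
    for _ in range(rounds):
        # Every client starts the round from the current global model.
        local_models = [local_gd(w, data, lr, local_steps) for data in clients]
        # The server averages the local models, weighted by client data size.
        w = sum(len(data) / total * w_k
                for data, w_k in zip(clients, local_models))
    return w


# Two clients whose (x, y) pairs all satisfy y = 2 * x.
clients = [[(1.0, 2.0), (2.0, 4.0)], [(1.0, 2.0), (3.0, 6.0)]]
print(fedavg(clients, rounds=50))
```

Because both clients here agree on the best model, the averaged model converges to it. The bias discussed in the article appears once the clients disagree.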

The study reveals that FedAvg’s bias is rooted in two primary sources: heterogeneity and stochasticity. Heterogeneity arises because each node holds different local data, so local training pulls each node's model towards a different solution and the updates sent back to the server are inconsistent with one another. Stochasticity, on the other hand, stems from the random sampling of data used to estimate gradients during the training process.
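A toy example can make the heterogeneity effect concrete. The sketch below is an assumption-laden illustration, not the study's framework: each client has a one-dimensional quadratic loss with its own curvature `a_k` and minimizer `c_k` (hypothetical values), gradients are exact so no randomness is involved, and `fedavg_quadratic` is a name introduced here.

```python
def fedavg_quadratic(curvatures, minimizers, lr, local_steps, rounds, w0=0.0):
    """FedAvg with exact gradients on client losses
    f_k(w) = 0.5 * a_k * (w - c_k)**2, all clients weighted equally."""
    w = w0
    for _ in range(rounds):
        local_models = []
        for a, c in zip(curvatures, minimizers):
            w_k = w
            for _ in range(local_steps):
                w_k -= lr * a * (w_k - c)  # gradient of f_k at w_k
            local_models.append(w_k)
        w = sum(local_models) / len(local_models)
    return w


a = [1.0, 4.0]  # assumed curvatures: client 2's loss is much steeper
c = [0.0, 1.0]  # assumed minimizers: the clients want different models

# Minimizer of the average loss, i.e. what training should ideally return.
w_star = sum(ak * ck for ak, ck in zip(a, c)) / sum(a)

one_step = fedavg_quadratic(a, c, lr=0.1, local_steps=1, rounds=200)
ten_steps = fedavg_quadratic(a, c, lr=0.1, local_steps=10, rounds=200)
print(w_star, one_step, ten_steps)
```

With one local step per round, the iterates settle at the minimizer of the average loss (0.8 for these values). With ten local steps they settle at roughly 0.60 instead. Since the gradients are exact, that offset comes purely from the clients disagreeing while they train in isolation.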

The researchers demonstrate that FedAvg’s bias can be quantified using a mathematical framework, which allows them to analyze its impact on the accuracy of trained models. They show that the algorithm’s bias is proportional to the number of nodes and the level of heterogeneity in the data. Furthermore, they find that stochasticity plays a significant role in amplifying this bias.

The study also provides insights into the behavior of FedAvg under different scenarios. For instance, when the number of nodes increases, the algorithm’s bias grows more pronounced. Similarly, when the data is highly heterogeneous, FedAvg’s bias becomes more severe.

These findings have significant implications for the development and deployment of machine learning models in real-world applications. By acknowledging and understanding the bias inherent in FedAvg, researchers can take steps to mitigate its effects and improve the accuracy of their models.

In practical terms, this means that developers should consider techniques such as data normalization or regularization to limit the effects of heterogeneity and stochasticity. Additionally, they may want to explore alternative algorithms that are more robust to these sources of bias.
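The article does not say which regularizer is meant. One option from the federated learning literature is a proximal penalty in the style of FedProx, which discourages each local model from moving far from the global model during a round. The sketch below is a hedged illustration on a toy problem: the quadratic client losses, the values of `a`, `c`, and `mu`, and the function name `fedavg_prox` are all assumptions, and the result says nothing about how the penalty behaves on real models.

```python
def fedavg_prox(curvatures, minimizers, lr, local_steps, rounds, mu=0.0, w0=0.0):
    """FedAvg on client losses f_k(w) = 0.5 * a_k * (w - c_k)**2 with an
    added proximal penalty 0.5 * mu * (w_k - w_global)**2 on each client.
    Setting mu=0 recovers plain FedAvg."""
    w = w0
    for _ in range(rounds):
        local_models = []
        for a, c in zip(curvatures, minimizers):
            w_k = w
            for _ in range(local_steps):
                # Client gradient plus the pull back towards the global model.
                grad = a * (w_k - c) + mu * (w_k - w)
                w_k -= lr * grad
            local_models.append(w_k)
        w = sum(local_models) / len(local_models)
    return w


a = [1.0, 4.0]  # assumed client curvatures
c = [0.0, 1.0]  # assumed client minimizers
w_star = sum(ak * ck for ak, ck in zip(a, c)) / sum(a)

plain = fedavg_prox(a, c, lr=0.1, local_steps=10, rounds=200, mu=0.0)
prox = fedavg_prox(a, c, lr=0.1, local_steps=10, rounds=200, mu=5.0)
print(abs(plain - w_star), abs(prox - w_star))
```

In this toy setting the penalty shrinks the offset from the ideal model but does not remove it, and a larger `mu` also slows progress per round, so the value has to be tuned.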

Cite this article: “Uncovering the Bias in Federated Averaging: A Study on its Impact on Machine Learning Models”, The Science Archive, 2025.

Machine Learning, Bias, FedAvg, Federated Averaging, Algorithm, Distributed Machine Learning, Decentralized Data, Heterogeneity, Stochasticity, Model Updates.