Saturday 01 February 2025

Deep learning has revolutionized many fields, but one of its most promising applications is in optimizing black-box functions. These are functions whose internal form is unknown or inaccessible, so they can only be studied by evaluating them at chosen inputs, often through a costly simulation or experiment. Finding the inputs that optimize them can be crucial for making accurate predictions or informed decisions.

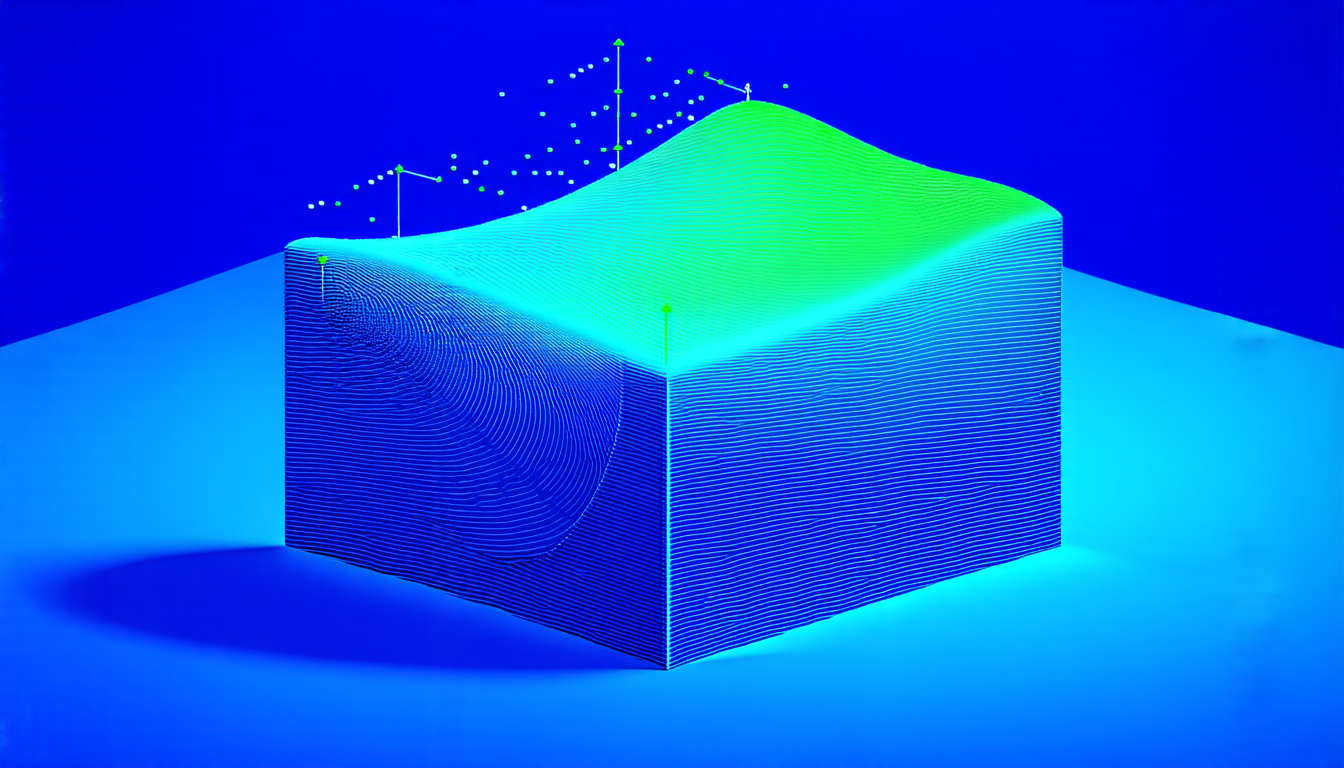

However, traditional methods for optimizing these functions often struggle with noisy data, limited samples, and high-dimensional spaces. This is where a new approach called Deep Gradient Interpolation (DGI) comes in. DGI uses neural networks to estimate the gradients of black-box functions, which gradient-based optimization algorithms require but which a black-box function does not supply directly.

The researchers behind DGI developed several variations of the method, each designed to tackle specific challenges. One key innovation is the use of interpolation paths to connect different points in the input space. These paths are sampled randomly and used to estimate the gradient at a given point.
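To make the idea of path-based gradient estimation concrete, here is a minimal sketch in Python. It is not the researchers' method: it swaps the neural network for a plain least-squares fit and assumes straight-line paths between randomly paired sample points near the query point. The function name `estimate_gradient` and the parameters `n_paths` and `radius` are hypothetical, chosen only for illustration. For a short straight path from one sample to another, the change in the function value is approximately the gradient dotted with the displacement, and the sketch solves for the gradient that best explains many such paths.

```python
import numpy as np


def estimate_gradient(x0, xs, ys, n_paths=200, radius=0.5, rng=None):
    """Least-squares gradient estimate at x0 from random straight-line paths.

    Illustrative stand-in for a learned estimator, NOT the DGI algorithm.
    xs has shape (n, d) and ys has shape (n,), holding (possibly noisy)
    black-box evaluations ys[i] ~ f(xs[i]).
    """
    rng = np.random.default_rng() if rng is None else rng

    # Keep only samples close to the query point so a local linear model is reasonable.
    near = np.flatnonzero(np.linalg.norm(xs - x0, axis=1) <= radius)
    if near.size < 2:
        raise ValueError("need at least two samples within `radius` of x0")

    # Randomly pair nearby samples; each pair defines one straight-line path.
    a = rng.choice(near, size=n_paths)
    b = rng.choice(near, size=n_paths)
    keep = a != b  # drop degenerate zero-length paths
    a, b = a[keep], b[keep]

    dx = xs[b] - xs[a]  # path displacements, shape (m, d)
    dy = ys[b] - ys[a]  # observed change in f along each path, shape (m,)

    # Solve dx @ g ~ dy in the least-squares sense.
    g, *_ = np.linalg.lstsq(dx, dy, rcond=None)
    return g
```

Because every path contributes one linear equation, noise in individual evaluations is averaged out across paths, which is one plausible reason a path-based estimator could cope with noisy data and few samples.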

Another important aspect of DGI is its ability to balance two competing goals: estimation accuracy and path independence. Estimation accuracy refers to how well the method can approximate the true gradient, while path independence means that the estimated gradient should not depend on the specific interpolation paths chosen.
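One way to picture this trade-off is as a loss with two terms. The sketch below is an assumption about how such an objective could look, not the formulation used by the researchers. It relies on a standard fact from vector calculus: the line integral of a true gradient field between two points equals the difference in function values and is the same along every path. The names `line_integral`, `combined_loss`, `x_mid` and `weight` are hypothetical. The accuracy term compares the integral along a straight path with the observed change in the function, and the path-independence term compares that straight path with a detour through a third point.

```python
import numpy as np


def line_integral(grad_fn, x_a, x_b, n_steps=32):
    """Midpoint-rule integral of grad_fn along the straight line from x_a to x_b."""
    t = (np.arange(n_steps) + 0.5) / n_steps        # midpoints of n_steps equal intervals
    points = x_a + t[:, None] * (x_b - x_a)          # shape (n_steps, d)
    grads = np.stack([grad_fn(p) for p in points])   # shape (n_steps, d)
    return float(np.mean(grads @ (x_b - x_a)))


def combined_loss(grad_fn, x_a, y_a, x_b, y_b, x_mid, weight=1.0):
    """Illustrative two-term loss (hypothetical, not the DGI objective).

    accuracy : squared gap between the integrated gradient along the direct
               path and the observed change y_b - y_a.
    path_dep : squared gap between the direct path and a detour via x_mid;
               it is zero for a field that is a true gradient.
    """
    direct = line_integral(grad_fn, x_a, x_b)
    detour = line_integral(grad_fn, x_a, x_mid) + line_integral(grad_fn, x_mid, x_b)
    accuracy = (direct - (y_b - y_a)) ** 2
    path_dep = (direct - detour) ** 2
    return accuracy + weight * path_dep, accuracy, path_dep
```

In this sketch, `weight` controls the balance: a larger value pushes the estimated field toward being a consistent gradient of some function, while a smaller value favors matching the observed data along the sampled paths.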

The researchers tested DGI on a range of simulation problems, including linear and quadratic functions, as well as more complex functions like those used in engineering design optimization. They also applied it to benchmark problems from the SimOpt testbed, a library of simulation-optimization problems.

The results were impressive: DGI outperformed traditional methods like ETD (Expected Tail Distribution) in many cases, especially when dealing with noisy data or limited samples. The method was also robust across different noise levels and able to adapt to changing conditions.

In addition to its performance, DGI has some practical advantages. It is relatively simple to implement and can be easily parallelized, making it well-suited for large-scale optimization problems.

The researchers believe that DGI has the potential to revolutionize the field of black-box optimization, enabling more accurate and efficient solutions to complex problems. They are already exploring new applications for the method, including optimization of machine learning models and control systems.

In short, Deep Gradient Interpolation is a powerful new approach to optimizing black-box functions. Its ability to adapt to noisy data, limited samples, and high-dimensional spaces makes it an attractive solution for many real-world problems.

Cite this article: “Deep Gradient Interpolation: A New Approach to Optimizing Black-Box Functions”, The Science Archive, 2025.

Black-Box Optimization, Deep Learning, Neural Networks, Gradient Interpolation, Estimation Accuracy, Path Independence, Simulation Problems, Real-World Datasets, Noisy Data, Limited Samples.