Saturday 01 February 2025

A new approach has been developed to improve the performance of artificial intelligence (AI) agents in complex environments, such as video games and simulations. The method, called Policy Gradient Tuning (PGT), combines machine learning algorithms with human feedback to fine-tune the agent’s policy, the rule that maps what the agent observes to the action it takes, so that it achieves specific goals.

In traditional AI systems, the policy is typically learned through trial and error, with the agent exploring the environment and adjusting its behavior based on the outcomes. However, this approach can be slow and inefficient, especially in complex environments where the optimal policy may not be easily discovered.

PGT addresses this challenge by using a technique called preference learning, which allows humans to provide feedback on the agent’s performance in the form of preferences between different trajectories or paths through the environment. This feedback is then used to adjust the agent’s policy to better align with human goals and objectives.
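To make the idea of learning from trajectory preferences concrete, here is a minimal Python sketch. It is not the researchers’ implementation: the article does not describe PGT’s internals, so the sketch assumes a Bradley-Terry preference model and a linear reward function, both common choices in preference learning. The function names, the two features (wood collected, damage taken), and the example trajectories are all hypothetical.

```python
import math


def trajectory_return(weights, trajectory):
    """Sum of linear per-step rewards (weights . features) over a trajectory."""
    return sum(
        sum(w * f for w, f in zip(weights, step))
        for step in trajectory
    )


def preference_probability(weights, traj_a, traj_b):
    """Bradley-Terry probability that traj_a is preferred over traj_b (assumed model)."""
    diff = trajectory_return(weights, traj_a) - trajectory_return(weights, traj_b)
    return 1.0 / (1.0 + math.exp(-diff))


def fit_reward_weights(preferences, n_features, lr=0.1, epochs=200):
    """Fit reward weights by gradient ascent on the log-likelihood of the preferences.

    preferences is a list of (preferred_trajectory, rejected_trajectory) pairs.
    """
    weights = [0.0] * n_features
    for _ in range(epochs):
        for preferred, rejected in preferences:
            p = preference_probability(weights, preferred, rejected)
            for i in range(n_features):
                # Difference in summed feature i between the two trajectories.
                phi_diff = sum(s[i] for s in preferred) - sum(s[i] for s in rejected)
                # Gradient of log p with respect to weights[i] is (1 - p) * phi_diff.
                weights[i] += lr * (1.0 - p) * phi_diff
    return weights


# Hypothetical per-step features: [wood collected, damage taken].
gathers_wood = [[1, 0], [1, 0]]
takes_damage = [[0, 1], [0, 0]]

# A human said they prefer the first trajectory over the second.
learned = fit_reward_weights([(gathers_wood, takes_damage)], n_features=2)
print(learned)
print(preference_probability(learned, gathers_wood, takes_damage))
```

In this sketch the learned weights reward the feature that appears in the preferred trajectory and penalize the one that appears in the rejected trajectory. A reward estimate of this kind could then serve as the signal for adjusting the agent’s policy, although how PGT performs that step is not specified in the article.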

The researchers tested PGT in several environments, including Minecraft, a popular sandbox game that requires players to gather resources, build structures, and fend off monsters. They found that the agent learned to perform tasks such as collecting wood, crafting tools, and exploring mines more efficiently and effectively than previous approaches.

One of the key advantages of PGT is its ability to learn from human feedback in real time, allowing the agent to adapt quickly to changing goals and objectives. This makes it particularly useful for applications where the environment is dynamic or uncertain, such as search and rescue missions or autonomous vehicles.

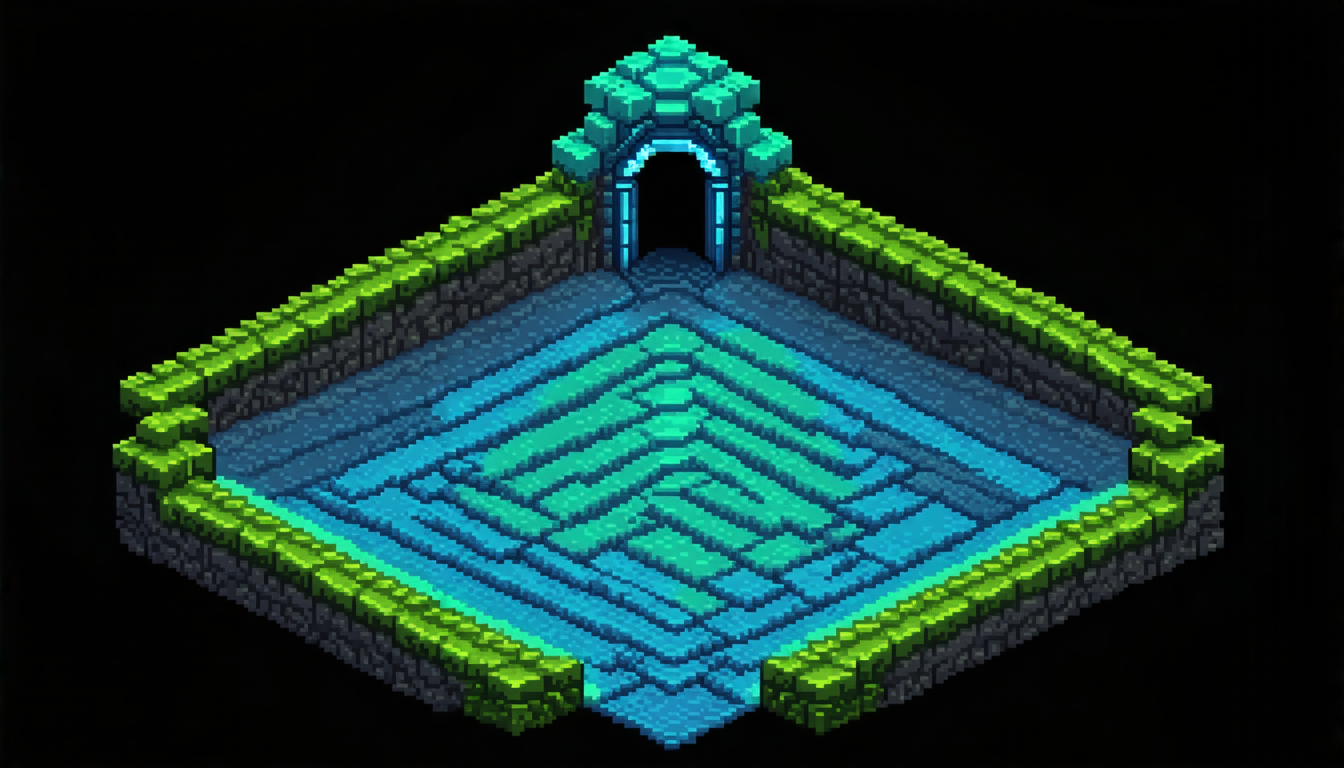

The researchers also tested PGT on a range of other tasks, including navigating through mazes, solving puzzles, and playing games like chess. They found that the agent was able to learn and adapt quickly in all of these domains, demonstrating its versatility and potential for real-world applications.

Overall, the results suggest that PGT could provide a more efficient and effective way to train agents to perform complex tasks. By leveraging human feedback and preference learning, PGT could enable AI systems to learn from humans in real time, adapt quickly to changing environments, and perform better across a wide range of applications.

Cite this article: “Policy Gradient Tuning: A New Approach to Improving Artificial Intelligence Performance”, The Science Archive, 2025.

Artificial Intelligence, Policy Gradient Tuning, Machine Learning, Human Feedback, Preference Learning, Complex Environments, Video Games, Simulations, Real-Time Adaptation, Dynamic Environments