Sunday 02 February 2025

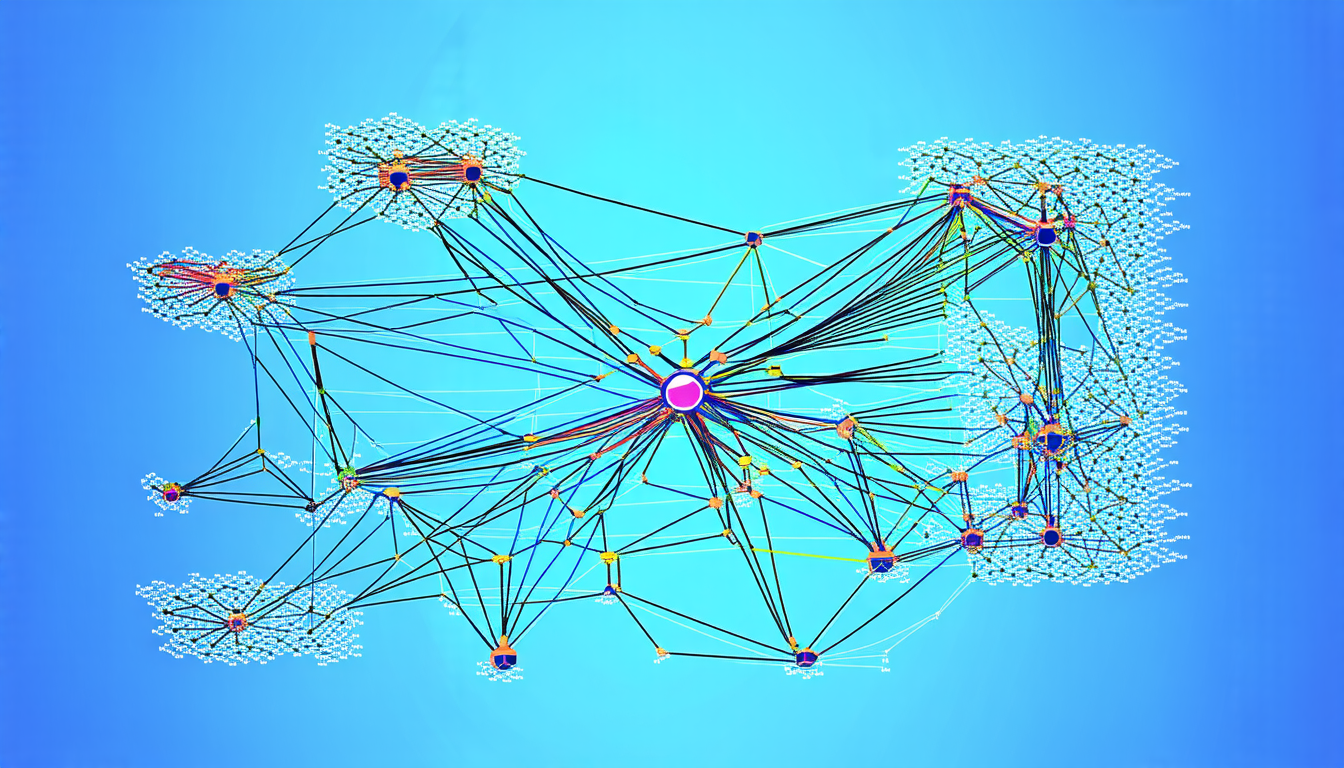

Cybersecurity agents are increasingly being used to defend complex networks against cyber-attacks, but these agents can be vulnerable to uncertainties in their runtime environment. These uncertainties arise either from insufficient knowledge about the system’s dynamics at training time or from changes in adversarial behavior that remain unknown to the system.

To address this issue, researchers have developed an approach that combines machine learning with symbolic reasoning to detect out-of-distribution situations, meaning conditions unlike those seen during training, in autonomous cyber-defense agents. These agents are trained with reinforcement learning, but they can struggle to detect changes in their environment or to adapt to new situations.

The new approach, called the Option-Critic architecture, uses a behavior tree framework to represent complex decision-making processes. The behavior tree is composed of nodes that represent different actions and conditions, and the system evaluates these nodes based on a set of rules and heuristics.
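To make the behavior tree idea concrete, here is a minimal sketch in Python. It is not the researchers' implementation: the node classes, the `host_compromised` flag, and the `restore_host` and `monitor` action names are all hypothetical, chosen only to show how condition and action nodes can be composed and evaluated.

```python
from enum import Enum
from typing import Callable, Dict, List


class Status(Enum):
    SUCCESS = 1
    FAILURE = 2


State = Dict[str, bool]


class Condition:
    """Leaf node that checks a predicate on the current state."""

    def __init__(self, predicate: Callable[[State], bool]) -> None:
        self.predicate = predicate

    def tick(self, state: State) -> Status:
        return Status.SUCCESS if self.predicate(state) else Status.FAILURE


class Action:
    """Leaf node that records a (hypothetical) defensive action."""

    def __init__(self, name: str, log: List[str]) -> None:
        self.name = name
        self.log = log

    def tick(self, state: State) -> Status:
        self.log.append(self.name)
        return Status.SUCCESS


class Sequence:
    """Succeeds only if every child succeeds, evaluated in order."""

    def __init__(self, children: list) -> None:
        self.children = children

    def tick(self, state: State) -> Status:
        for child in self.children:
            if child.tick(state) is Status.FAILURE:
                return Status.FAILURE
        return Status.SUCCESS


class Selector:
    """Tries children in order and stops at the first success."""

    def __init__(self, children: list) -> None:
        self.children = children

    def tick(self, state: State) -> Status:
        for child in self.children:
            if child.tick(state) is Status.SUCCESS:
                return Status.SUCCESS
        return Status.FAILURE


def run_once(state: State) -> List[str]:
    """Build a tiny illustrative tree, tick it once, return the actions taken."""
    log: List[str] = []
    tree = Selector([
        Sequence([
            Condition(lambda s: s.get("host_compromised", False)),
            Action("restore_host", log),
        ]),
        Action("monitor", log),  # fallback when nothing looks wrong
    ])
    tree.tick(state)
    return log
```

Calling `run_once({"host_compromised": True})` takes the restore branch, while any other state falls through to monitoring. In a hybrid design of the kind the article describes, a learned policy could sit behind an action node, although the source does not say exactly where the learned components are placed.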

In addition, the researchers have developed an out-of-distribution detection algorithm that can detect changes in the environment or the agent’s own behavior. This algorithm uses a probabilistic neural network to estimate how likely a given state or action is to fall within the normal operating range of the system.
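The article gives no details of the detector, so the following is only a rough sketch under a stated assumption: that "probabilistic neural network" refers to a Parzen-window style model, in which a state is scored by its average Gaussian kernel similarity to states seen during training. The function names, the `sigma` and `threshold` values, and the toy `TRAINING_STATES` data are all hypothetical.

```python
import math
from typing import List, Sequence

# Hypothetical in-distribution states (e.g. normalized features seen in training).
TRAINING_STATES: List[Sequence[float]] = [
    (0.0, 0.0),
    (0.1, 0.0),
    (0.0, 0.1),
    (0.1, 0.1),
]


def kernel_score(x: Sequence[float],
                 samples: List[Sequence[float]],
                 sigma: float = 0.5) -> float:
    """Average Gaussian kernel between x and the stored training samples.

    This is an unnormalized similarity in (0, 1], not a calibrated probability.
    """
    total = 0.0
    for sample in samples:
        sq_dist = sum((a - b) ** 2 for a, b in zip(x, sample))
        total += math.exp(-sq_dist / (2.0 * sigma ** 2))
    return total / len(samples)


def is_out_of_distribution(x: Sequence[float],
                           samples: List[Sequence[float]],
                           sigma: float = 0.5,
                           threshold: float = 0.05) -> bool:
    """Flag x when its score falls below an assumed, hand-picked threshold."""
    return kernel_score(x, samples, sigma) < threshold
```

A state close to the training data, such as `(0.05, 0.05)`, scores near 1 and is not flagged, while a distant state such as `(3.0, 3.0)` scores near 0 and is flagged. In practice the threshold would need to be tuned on held-out data rather than fixed by hand.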

The researchers tested their approach on a simulated cyber-attack scenario, using the CybORG gym environment to evaluate the performance of the autonomous cyber-defense agents. The results showed that the agents adapted to changes in the environment and detected out-of-distribution situations, even when the adversarial behavior was unknown.

This new approach has significant implications for the development of trustworthy autonomous cyber-defense agents. By combining machine learning with symbolic reasoning, these agents can better adapt to changing environments and detect potential threats more effectively. This could lead to improved cybersecurity in complex networks and a reduction in the risk of cyber-attacks.

The researchers believe that their approach can be applied to other areas of artificial intelligence, such as robotics and autonomous vehicles. With behavior trees and probabilistic neural networks, such systems could likewise adapt to changing environments and recognize situations that fall outside their training.

Overall, this approach could make autonomous cyber-defense agents more effective and adaptable. Combining machine learning with symbolic reasoning helps these agents detect out-of-distribution situations and adjust to changing environments, which in turn could strengthen the security of complex networks.

Cite this article: “Adapting Autonomous Cyber-Defense Agents to Uncertainty”, The Science Archive, 2025.

Cybersecurity, Autonomous Agents, Machine Learning, Symbolic Reasoning, Out-Of-Distribution Detection, Behavior Trees, Probabilistic Neural Networks, Reinforcement Learning, Cyber-Attacks, Artificial Intelligence.