Sunday 02 February 2025

Deep learning algorithms have revolutionized many fields, including medicine. They can analyze medical images and, on some tasks, diagnose diseases with accuracy that rivals trained specialists. However, these algorithms are notoriously difficult to understand, even for experts. This lack of transparency is a major concern in healthcare, where decisions made by AI systems can have serious consequences.

To address this issue, researchers have developed various techniques to explain how deep learning models make their predictions. These explanations aim to provide insight into the decision-making process and help clinicians understand why the model reached its conclusion. However, it’s not clear which of these techniques is most effective, or whether any one of them is sufficient on its own.

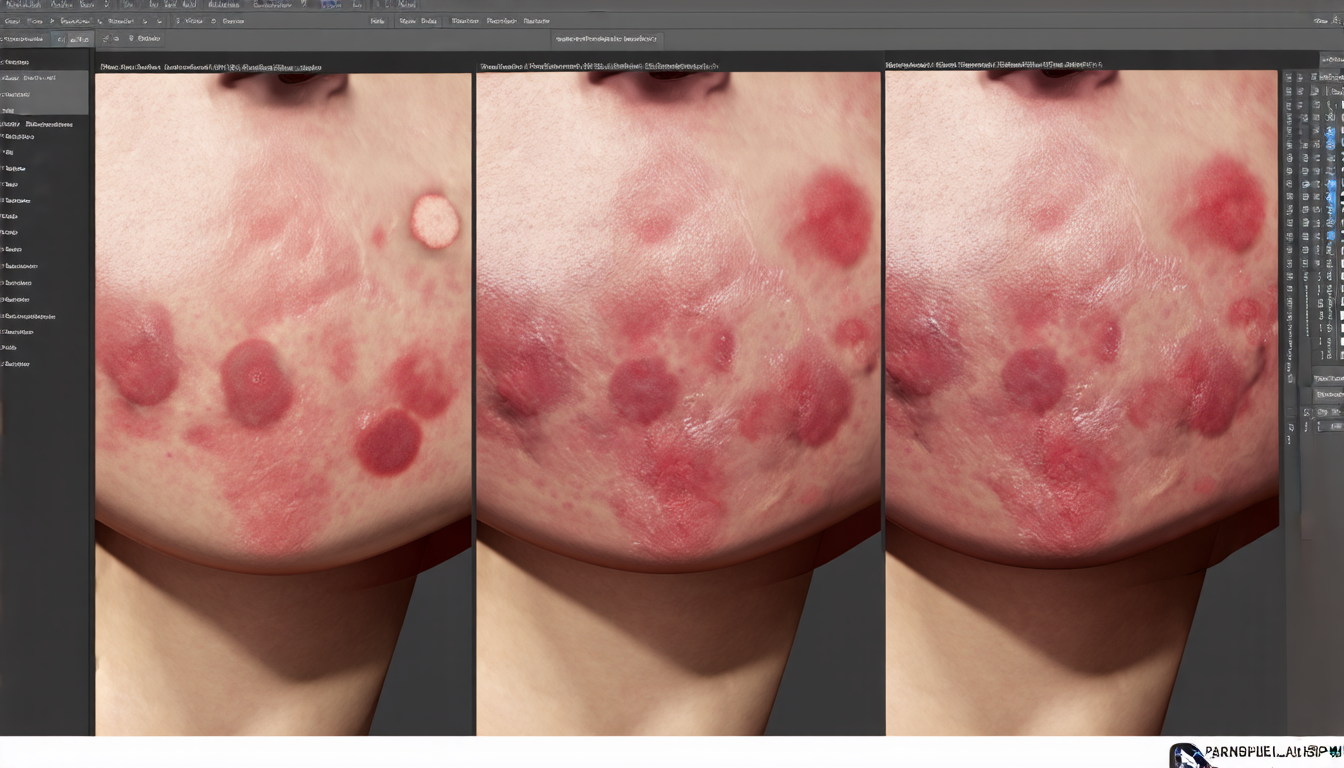

A recent study compared seven different methods for explaining deep learning models in skin cancer diagnosis. The researchers trained a convolutional neural network (CNN) to classify images of skin lesions as either benign or malignant. They then used various techniques to explain the model’s predictions, including pixel attribution methods and concept-based explanations.

Pixel attribution methods highlight the regions of an image that contributed most to the model’s prediction. By pointing to specific visual features, they can help clinicians see why the model reached its conclusion. However, these methods may not give a complete picture of the decision-making process, because they score individual pixels rather than larger patterns or concepts.
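
To make the idea concrete, here is a minimal sketch of one common pixel attribution approach, occlusion sensitivity: hide one patch of the image at a time and record how much the model’s score drops. The article does not say which attribution methods the study used, so this is an illustration only. The names `occlusion_map` and `toy_score` are hypothetical, and `toy_score` is a stand-in for a trained CNN.

```python
import numpy as np


def occlusion_map(image, score_fn, patch=4, baseline=0.0):
    """Heatmap of score drops when each patch is replaced by `baseline`."""
    height, width = image.shape
    base_score = score_fn(image)
    heatmap = np.zeros((height, width), dtype=float)
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            occluded = image.copy()
            occluded[top:top + patch, left:left + patch] = baseline
            # A large drop means the score depended on this region.
            drop = base_score - score_fn(occluded)
            heatmap[top:top + patch, left:left + patch] = drop
    return heatmap


def toy_score(image):
    """Hypothetical stand-in for a CNN's malignancy score (assumption):
    the mean intensity of a fixed central window."""
    return float(image[4:8, 4:8].mean())


# Toy 12x12 grayscale "lesion" image with a bright central square.
toy_image = np.zeros((12, 12))
toy_image[4:8, 4:8] = 1.0

heatmap = occlusion_map(toy_image, toy_score, patch=4)
```

In this toy case only the central patch receives a non-zero value, because it is the only region the stand-in score looks at. With a real model, the same loop would call the network’s forward pass in place of `toy_score`.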

Concept-based explanations, on the other hand, identify higher-level concepts that contributed to the model’s prediction. These concepts can correspond to specific medical features, such as pigmentation or vascular structures. This approach provides more context and insight into the decision-making process, but interpreting the results correctly may require domain knowledge.
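
One way to picture a concept-based explanation is as a direction in the model’s internal activation space. The sketch below is loosely modelled on concept activation vectors and is not the study’s method. It estimates a concept direction as the difference between the mean activations of concept examples and of random examples, then asks how strongly a prediction responds to movement along that direction. All data here is synthetic, the function names are hypothetical, and the classifier head is assumed to be linear, so its gradient is simply its weight vector.

```python
import numpy as np

rng = np.random.default_rng(0)


def concept_activation_vector(concept_acts, random_acts):
    """Unit vector pointing from random activations toward concept activations.

    Simplification (assumption): a difference of means is used here instead
    of fitting a linear classifier between the two sets.
    """
    direction = concept_acts.mean(axis=0) - random_acts.mean(axis=0)
    return direction / np.linalg.norm(direction)


def concept_sensitivity(head_weights, cav):
    """Directional derivative of a linear head's logit along the concept.

    For logit = w . a, the gradient with respect to the activation a is w.
    """
    return float(np.dot(head_weights, cav))


# Synthetic 8-dimensional activations (assumption): examples showing the
# concept, e.g. "pigmentation", are shifted along dimension 0.
random_acts = rng.normal(size=(50, 8))
concept_acts = rng.normal(size=(50, 8))
concept_acts[:, 0] += 3.0

# Hypothetical linear head that leans on dimension 0 for "malignant".
head_weights = np.zeros(8)
head_weights[0] = 2.0
head_weights[1] = -0.5

cav = concept_activation_vector(concept_acts, random_acts)
sensitivity = concept_sensitivity(head_weights, cav)
```

A positive `sensitivity` suggests that more of the concept pushes the score toward the class in question, and a negative value suggests the opposite. Deciding which concepts to test, and which example images represent them, is where the domain knowledge comes in.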

The study found that each method had its strengths and limitations. Pixel attribution methods were effective in identifying biases and spurious correlations within the data, while concept-based explanations provided a higher-level understanding of the decision-making process. However, none of the techniques alone was sufficient to provide a complete explanation of the model’s predictions.

This study highlights the importance of combining multiple explanation techniques to achieve transparency in AI systems. By using a combination of pixel attribution and concept-based explanations, clinicians can gain a deeper understanding of how the model reached its conclusion and make more informed decisions about patient care.

In the future, researchers will need to develop even more sophisticated explanation techniques that can provide a complete and accurate picture of the decision-making process. This will require collaboration between experts in AI, medicine, and psychology to create explanations that are both technically sound and clinically relevant.

Cite this article: “Unlocking Transparency in Deep Learning Models for Medical Diagnosis”, The Science Archive, 2025.

Deep Learning, Medical Imaging, Skin Cancer Diagnosis, Convolutional Neural Networks, Pixel Attribution, Concept-Based Explanations, Transparency, AI Systems, Decision-Making Process, Machine Learning.