Saturday 01 March 2025

Optimization is a crucial process in many fields, from finance to engineering, where the goal is to find the best possible solution among a vast array of options. Sumscale problems are a class of optimization problems that standard methods often handle poorly, because the parameters are tied together by an additional scaling condition.

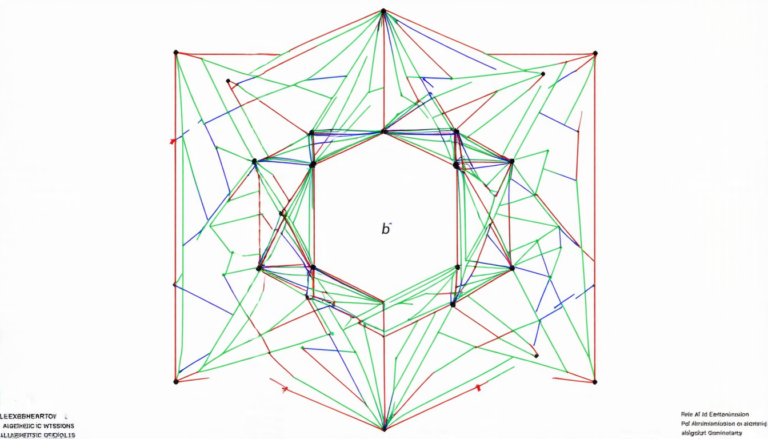

A sumscale problem arises when the parameters of an objective function are constrained by a scaling condition: a sum of the parameters, of their squares, or of a similarly simple function must equal a fixed constant. Typical examples are weights that must add up to one or a vector that must have unit length. This type of problem is challenging because every candidate solution must satisfy the condition exactly, so the parameters cannot be varied independently of one another.
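In symbols, a problem of this kind can be written as below. The notation (objective $f$, scaling function $q$, constant $c$, parameter vector $x$ of length $n$) is an assumption introduced here for illustration and is not taken from the article.

$$
\min_{x \in \mathbb{R}^n} f(x) \quad \text{subject to} \quad q(x) = c, \qquad \text{for example} \quad q(x) = \sum_{i=1}^{n} x_i \quad \text{or} \quad q(x) = \sum_{i=1}^{n} x_i^2 .
$$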

Researchers have been working on developing methods to tackle sumscale problems more efficiently. One approach is to use constrained optimization algorithms that accept the scaling condition as an explicit constraint. Such algorithms adjust the parameters at each step so that the condition continues to hold, and the problem itself does not need to be reformulated.

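Another strategy is to transform the problem by embedding the scaling condition into the objective function itself, for example by rescaling the parameters inside the function so that any input is mapped to a point that satisfies the condition. The algorithm then no longer has to enforce the constraint separately, and an unconstrained optimizer can be used. This approach has been shown to be effective in certain situations, but it's not a one-size-fits-all solution. One drawback of rescaling is that the transformed objective no longer depends on the overall scale of the inputs, so its minimizer is not unique.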
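A minimal sketch of the embedding idea, under stated assumptions: the scaling condition is that the parameters sum to one, the objective (the negative log of the product of the parameters) is an illustrative choice, and the helper names `rescale`, `embedded`, and `minimize_embedded` are hypothetical. The optimizer is a plain gradient descent with finite-difference gradients, chosen only to keep the example self-contained.

```python
import math

def rescale(y):
    """Map positive values y to x = y / sum(y), so that sum(x) == 1."""
    total = sum(y)
    return [v / total for v in y]

def objective(x):
    """Illustrative objective: negative log of the product of the parameters."""
    return -sum(math.log(v) for v in x)

def embedded(y):
    """Objective with the scaling condition folded in through rescale()."""
    return objective(rescale(y))

def numeric_grad(f, y, h=1e-6):
    """Central-difference gradient; adequate for a small demonstration."""
    grad = []
    for i in range(len(y)):
        up = y[:i] + [y[i] + h] + y[i + 1:]
        down = y[:i] + [y[i] - h] + y[i + 1:]
        grad.append((f(up) - f(down)) / (2 * h))
    return grad

def minimize_embedded(y0, step=0.5, iters=500):
    """Unconstrained gradient descent on the embedded objective."""
    y = list(y0)
    for _ in range(iters):
        g = numeric_grad(embedded, y)
        y = [yi - step * gi for yi, gi in zip(y, g)]
    # Report the result in the original, constrained parameters.
    return rescale(y)

x_opt = minimize_embedded([1.0, 2.0, 3.0])
print(x_opt, sum(x_opt))
```

For this objective the constrained minimum has all parameters equal, so the returned values should be close to one third each. Because `embedded` gives the same value for `y` and any positive multiple of `y`, the unconstrained problem has a whole ray of minimizers, which is one reason this transformation does not suit every optimizer.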

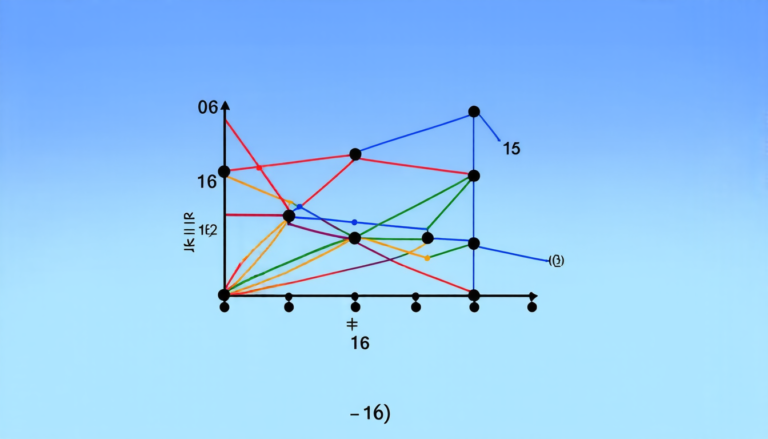

A more sophisticated method involves using projected gradients. In this approach, after each step the algorithm projects the parameters back onto the feasible set, that is, the set of points that satisfy the scaling condition. Every iterate is therefore a valid solution candidate, although the method can still converge to a local minimum rather than a global one.
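The projection step can be sketched as follows. This is an illustration under assumptions, not the method from the study: the feasible set is taken to be all vectors whose entries sum to one (no bounds such as non-negativity), the objective is a simple squared distance to a made-up `target` vector, and the function names are hypothetical.

```python
def project_to_sum(x, total=1.0):
    """Euclidean projection onto the set {x : sum(x) == total}."""
    shift = (sum(x) - total) / len(x)
    return [v - shift for v in x]

def grad(x, target):
    """Gradient of the illustrative objective sum((x_i - target_i)**2)."""
    return [2.0 * (v - t) for v, t in zip(x, target)]

def projected_gradient(target, step=0.1, iters=200):
    """Gradient step followed by projection, repeated a fixed number of times."""
    n = len(target)
    x = [1.0 / n] * n  # feasible starting point
    for _ in range(iters):
        g = grad(x, target)
        x = project_to_sum([v - step * gi for v, gi in zip(x, g)])
    return x

target = [0.5, 0.8, 0.2]  # made-up data; its entries sum to 1.5, not 1
x_pg = projected_gradient(target)
print(x_pg, sum(x_pg))
```

Every iterate produced by the loop sums to one up to floating-point rounding, which is the feasibility guarantee described above. For this particular objective the result should approach the target shifted down uniformly so that its entries sum to one.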

The researchers also explored the use of specialized software designed specifically for sumscale problems. These programs can often find solutions more quickly and accurately than general-purpose optimization algorithms.

One of the most promising results from this study is a method that combines the strengths of the different approaches. It incorporates the scaling condition into the objective function while also using projected gradients to keep every iterate feasible, and the resulting algorithm was reported to solve sumscale problems efficiently.

The implications of this research are far-reaching. In fields such as finance and engineering, where optimization is a critical component, solving sumscale problems more effectively can lead to significant improvements in decision-making and design. Moreover, the development of new methods for tackling these challenges can have a ripple effect throughout various disciplines.

The next step is to further refine and test these methods in real-world applications. By doing so, researchers hope to make optimization more accessible and efficient, allowing us to tackle complex problems with greater ease and accuracy.

Cite this article: “Efficient Optimization of Sumscale Problems”, The Science Archive, 2025.

Optimization, Sumscale, Problem-Solving, Scaling Conditions, Constraints, Parameters, Algorithm, Feasibility, Projected Gradients, Optimization Methods.

Reference: John C. Nash, Ravi Varadhan, “Optimization problems constrained by parameter sums” (2025).