Saturday 01 March 2025

In recent years, researchers have been developing scaling laws for floating-point quantization training of large language models (LLMs). These laws aim to describe how different factors influence LLM performance when training is carried out in lower-precision number formats.

Research in this area has so far focused primarily on integer quantization, which converts model weights and activations to integers. Floating-point quantization is becoming increasingly important, however, because low-bit floating-point formats can represent weights and activations more accurately while still reducing computational cost.

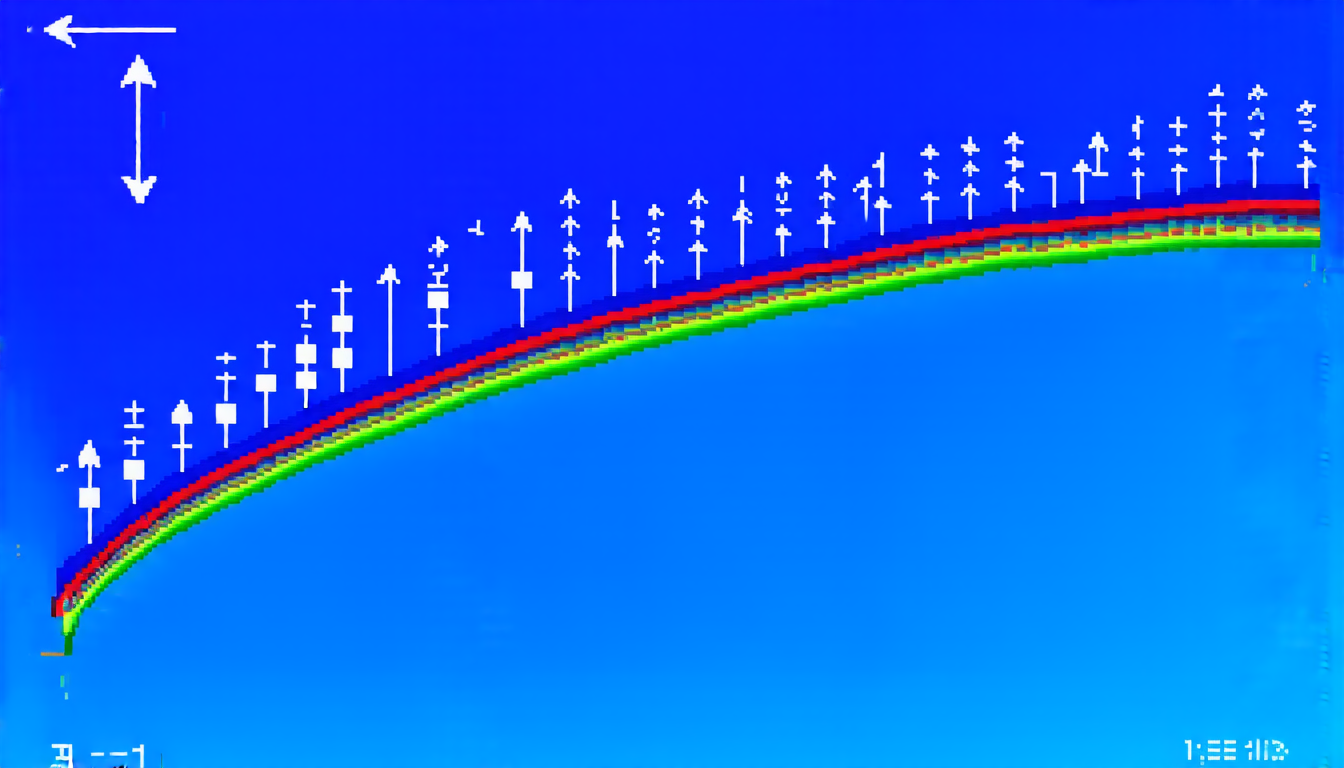

In a recent study, researchers explored how the floating-point quantization targets, the number of exponent bits, the number of mantissa bits, and the granularity at which scaling factors are calculated affect LLM performance during training. They found that the optimal exponent-mantissa bit ratio depends on the precision required for each model, with higher-precision models benefiting from more exponent bits.
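To make the exponent/mantissa trade-off concrete, here is a minimal sketch that rounds a number to a toy floating-point format with a chosen number of exponent and mantissa bits. It is not the study's code. The function names `fp_quantize` and `mean_abs_error` are hypothetical, and the format assumes IEEE-like conventions (top exponent code reserved, subnormals supported, out-of-range values saturated), which differ from some hardware FP8 variants.

```python
import math
import random


def fp_quantize(x: float, exp_bits: int, man_bits: int) -> float:
    """Round x to the nearest value in a toy IEEE-like float format (assumed conventions)."""
    bias = 2 ** (exp_bits - 1) - 1
    min_exp = 1 - bias                      # exponent of the smallest normal number
    max_exp = (2 ** exp_bits - 2) - bias    # top exponent code is treated as reserved
    max_val = (2 - 2.0 ** -man_bits) * 2.0 ** max_exp

    mag = min(abs(x), max_val)              # saturate instead of overflowing
    if mag == 0.0:
        return 0.0

    _, e = math.frexp(mag)                  # mag = m * 2**e with 0.5 <= m < 1
    exponent = max(e - 1, min_exp)          # below min_exp, spacing stays fixed (subnormals)
    step = 2.0 ** (exponent - man_bits)     # gap between neighbouring representable values
    return math.copysign(round(mag / step) * step, x)


def mean_abs_error(values, exp_bits: int, man_bits: int) -> float:
    """Average absolute rounding error over a list of values."""
    return sum(abs(fp_quantize(v, exp_bits, man_bits) - v) for v in values) / len(values)


if __name__ == "__main__":
    random.seed(0)
    samples = [random.gauss(0.0, 1.0) for _ in range(10_000)]
    # Same 8-bit budget (1 sign bit + exponent + mantissa), split two different ways.
    for name, (e_bits, m_bits) in {"E4M3": (4, 3), "E5M2": (5, 2)}.items():
        print(name, mean_abs_error(samples, e_bits, m_bits))
```

More mantissa bits shrink the gap between neighbouring values, while more exponent bits widen the range that can be represented before saturation. Which split works best depends on the distribution of the values being quantized, which is why the ratio has to be chosen rather than fixed in advance.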

The researchers also discovered a critical data size threshold beyond which increasing the amount of training data can actually degrade LLM performance. This finding highlights the importance of balancing the quantity and quality of training data when using lower precision models.
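One way to see how such a threshold can arise is a toy loss with two competing terms: one that shrinks as data grows, and a precision-related penalty that grows with data. The sketch below is purely illustrative. The functional form, the names `toy_loss` and `critical_data_size`, and all constants are assumptions made for this example, not the study's fitted law.

```python
def toy_loss(d_tokens: float, d_coef: float = 100.0, beta: float = 0.5,
             k: float = 1e-4) -> float:
    """Assumed toy loss: a data term that falls with D plus a penalty that rises with D."""
    return d_coef / d_tokens ** beta + k * d_tokens ** beta


def critical_data_size(d_coef: float = 100.0, beta: float = 0.5,
                       k: float = 1e-4) -> float:
    """Data size minimising toy_loss; setting the derivative to zero gives D**(2*beta) = d_coef / k."""
    return (d_coef / k) ** (1.0 / (2.0 * beta))


if __name__ == "__main__":
    d_crit = critical_data_size()
    for d in (d_crit / 10, d_crit, d_crit * 10):
        print(f"D = {d:.3g}  loss = {toy_loss(d):.4f}")
```

In this toy setting, a smaller penalty coefficient `k` (standing in for higher precision) pushes the critical data size further out, so the loss keeps improving over a longer stretch of training data.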

Additionally, the study found that the optimal floating-point quantization precision is directly proportional to the computational power available for training. Within a certain range of computational power, however, the best cost-performance trade-off is achieved at lower precisions of between 4 and 8 bits.

These findings have significant implications for the development and deployment of LLMs in various applications. By understanding the optimal quantization settings for different models and hardware configurations, developers can create more efficient and accurate language models that require fewer computational resources.

The study’s results also emphasize the importance of carefully selecting the training data and precision level to avoid degradation of model performance. This is particularly crucial when working with limited computational resources or large datasets that may exceed the critical data size threshold.

Furthermore, the researchers’ work paves the way for future studies on floating-point quantization and its applications in LLMs. The development of more accurate and efficient language models will have a significant impact on various fields such as natural language processing, text generation, and machine translation.

Overall, this study provides valuable insights into how floating-point quantization affects LLM performance during training. With a clearer picture of these factors, researchers and developers can build more effective and efficient language models for a wide range of applications.

Cite this article: “Optimizing Floating-Point Quantization for Large Language Models”, The Science Archive, 2025.

Large Language Models, Floating-Point Quantization, Training Performance, Precision, Computational Power, Integer Quantization, Model Weights, Activations, Data Size Threshold, Cost-Performance Trade-Off