Sunday 23 February 2025

For decades, mathematicians and computer scientists have been trying to understand the limits of a fundamental algorithm called gradient descent. This method is used in many areas, such as machine learning, optimization, and data analysis. It’s a simple yet powerful tool that helps computers find the best solution to complex problems by minimizing a function that scores how good each candidate solution is.

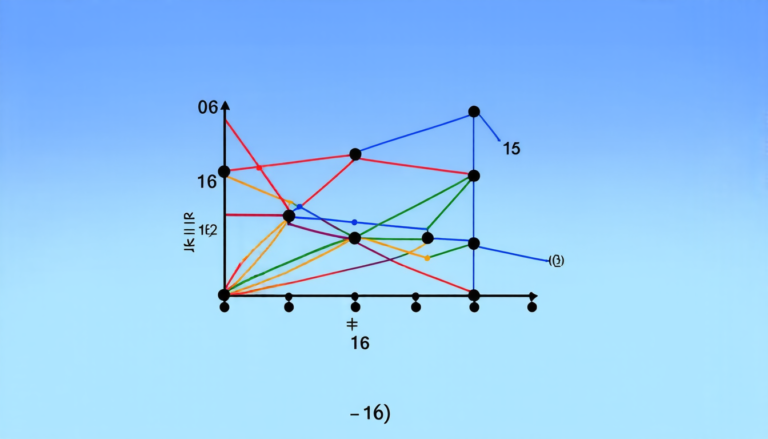

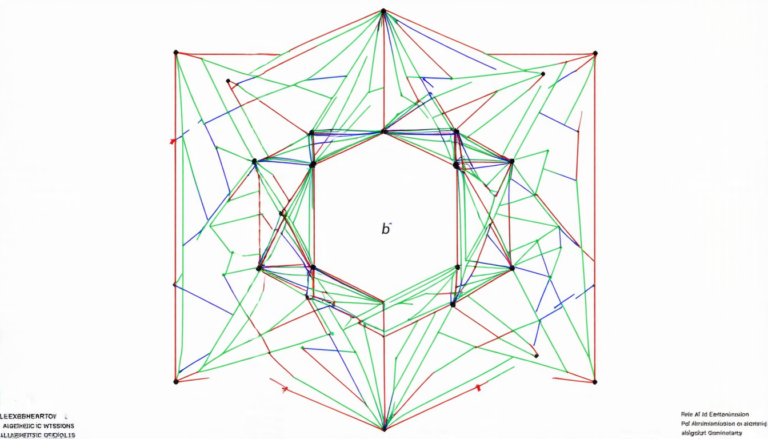

The algorithm works by repeatedly updating an estimate of the optimal solution, moving it a small step in the direction of steepest descent, which is the negative of the function’s gradient. However, until recently, researchers were unable to determine the exact rate at which gradient descent converges to the optimal solution. This was a major limitation, because it made it difficult to predict how well the algorithm would perform in practice.
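A minimal sketch of the update rule described above, in Python. The function name `gradient_descent`, the fixed step size, and the two-variable quadratic test function are illustrative assumptions, not the researchers’ setup.

```python
def gradient_descent(grad, x0, step_size, num_steps):
    """Run fixed-step gradient descent and return the list of iterates."""
    x = list(x0)
    iterates = [list(x)]
    for _ in range(num_steps):
        g = grad(x)
        # Move against the gradient, i.e. in the direction of steepest descent.
        x = [xi - step_size * gi for xi, gi in zip(x, g)]
        iterates.append(list(x))
    return iterates


# Hypothetical objective: f(x, y) = 0.5 * (1 * x**2 + 10 * y**2), minimized at (0, 0).
# Its curvatures are 1 and 10, so mu = 1 and L = 10 in the usual notation.
def grad_f(p):
    x, y = p
    return [1.0 * x, 10.0 * y]


path = gradient_descent(grad_f, x0=[1.0, 1.0], step_size=0.15, num_steps=50)
print(path[-1])
```

Each iterate moves closer to the minimizer at the origin; the slowest coordinate (the one with curvature 1) sets how quickly the whole estimate converges.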

Recently, a team of mathematicians and computer scientists made a significant breakthrough in understanding the limits of gradient descent. They developed a new method that allows them to exactly compute the convergence rate of the algorithm for any smooth and strongly convex function.
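For readers who want the terms pinned down, these are the standard definitions of an $L$-smooth and a $\mu$-strongly convex function, together with the gradient descent update (step size $\eta$) and the classical textbook guarantee for the step $\eta = 1/L$. This worst-case bound is shown only for context; it is not the researchers’ new result, whose details the article does not give.

$$
\|\nabla f(x) - \nabla f(y)\| \le L\,\|x - y\| \qquad (L\text{-smooth})
$$

$$
f(y) \ge f(x) + \nabla f(x)^\top (y - x) + \frac{\mu}{2}\,\|y - x\|^2 \qquad (\mu\text{-strongly convex})
$$

$$
x_{k+1} = x_k - \eta\,\nabla f(x_k), \qquad
f(x_k) - f^\star \le \left(1 - \frac{\mu}{L}\right)^{k} \bigl(f(x_0) - f^\star\bigr) \quad \text{for } \eta = \tfrac{1}{L}
$$

Here $f^\star$ is the minimum value of $f$; the ratio $\kappa = L/\mu$ (the condition number) controls how close the factor $1 - \mu/L$ is to 1, and therefore how slowly the guaranteed error shrinks.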

To understand what this means, let’s consider a simple analogy. Suppose you’re trying to find the shortest route between two cities using a car GPS. Real navigation systems mostly rely on graph-search methods such as Dijkstra’s algorithm rather than gradient descent, but the picture is similar: the system refines candidate routes, weighing factors such as traffic patterns and road conditions, until it settles on the best one.

The convergence rate measures how quickly the error, the gap between the current estimate and the optimum, shrinks with each iteration. In the analogy, it determines how quickly the GPS closes in on the optimal route. If the convergence rate is fast, the GPS provides accurate directions almost immediately. If it is slow, the GPS takes longer to find the best route, which can frustrate the driver.
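To make “fast” and “slow” concrete: when gradient descent converges linearly, the error shrinks by roughly a constant factor ρ < 1 per iteration, so reducing it by a factor ε takes about log ε / log ρ iterations. The helper `iterations_needed` below is a hypothetical illustration, and the rates 0.5, 0.9, and 0.99 are arbitrary example values, not figures from the research.

```python
import math


def iterations_needed(rate, tolerance):
    """Smallest k with rate**k <= tolerance, for a per-step contraction 0 < rate < 1."""
    if not 0.0 < rate < 1.0:
        raise ValueError("rate must lie strictly between 0 and 1")
    if not 0.0 < tolerance < 1.0:
        raise ValueError("tolerance must lie strictly between 0 and 1")
    k = math.ceil(math.log(tolerance) / math.log(rate))
    # Guard against floating-point rounding in the logarithm ratio.
    while k > 0 and rate ** (k - 1) <= tolerance:
        k -= 1
    while rate ** k > tolerance:
        k += 1
    return k


# Shrink the error by a factor of one million at three illustrative rates.
for rate in (0.5, 0.9, 0.99):
    print(rate, iterations_needed(rate, 1e-6))
```

Moving the rate from 0.9 to 0.99 multiplies the work by roughly ten, which is why knowing the rate precisely matters when predicting running time.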

Because the new method yields the exact convergence rate rather than an upper bound, the researchers can predict how the algorithm will perform in practice instead of relying on worst-case estimates that may overstate the number of steps needed. This is a major advantage over previous approaches.
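For one special case the exact rate can be written down by hand: a quadratic $f(x) = \tfrac12 \sum_i \lambda_i x_i^2$ with positive $\lambda_i$, which is smooth and strongly convex with $\mu$ and $L$ equal to its smallest and largest $\lambda_i$. Each gradient step multiplies coordinate $i$ by $(1 - \eta\lambda_i)$, so the worst-case per-step contraction is $\max_i |1 - \eta\lambda_i|$. The sketch below compares that prediction with the rate measured by running the iteration. The function names are hypothetical, and this textbook calculation is not the researchers’ general method, which the article does not describe.

```python
import math


def predicted_rate(eigenvalues, step_size):
    """Exact per-step contraction of fixed-step gradient descent on a quadratic.

    For f(x) = 0.5 * sum(lam_i * x_i**2), each coordinate is multiplied by
    (1 - step_size * lam_i) per step, so the worst case is the largest magnitude.
    """
    return max(abs(1.0 - step_size * lam) for lam in eigenvalues)


def measured_rate(eigenvalues, step_size, num_steps=50):
    """Run gradient descent from x0 = (1, ..., 1) and return the last norm ratio."""
    x = [1.0] * len(eigenvalues)
    prev_norm = math.sqrt(sum(v * v for v in x))
    ratio = float("nan")
    for _ in range(num_steps):
        # The gradient of the quadratic is lam_i * x_i, coordinate by coordinate.
        x = [xi - step_size * lam * xi for xi, lam in zip(x, eigenvalues)]
        norm = math.sqrt(sum(v * v for v in x))
        ratio = norm / prev_norm
        prev_norm = norm
    return ratio


mu, L = 1.0, 10.0
# Compare an arbitrary step with the step 2 / (L + mu).
for eta in (0.15, 2.0 / (L + mu)):
    print(eta, predicted_rate([mu, L], eta), measured_rate([mu, L], eta))
```

The measured ratio approaches the prediction because the starting point has a nonzero component along the slowest direction. With the step $2/(L+\mu)$ both extreme factors have magnitude $(\kappa - 1)/(\kappa + 1)$, here $9/11$ for $\kappa = L/\mu = 10$, which is the smallest worst-case factor any single fixed step achieves on this quadratic.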

The implications of this breakthrough are far-reaching. For example, it could be used to improve the performance of machine learning algorithms, which rely heavily on gradient descent. It could also be used to develop more efficient optimization techniques for complex problems.

In addition, the new method has important theoretical implications. It provides a deeper understanding of the fundamental limits of gradient descent and how it interacts with different types of functions. This knowledge can be used to develop new algorithms that are more efficient and effective than existing methods.

Overall, the recent breakthrough in understanding the limits of gradient descent is an exciting development that could have significant impacts on many areas of science and engineering.

Cite this article: “Unlocking the Secrets of Gradient Descent: A Breakthrough in Optimization Algorithms”, The Science Archive, 2025.

Machine Learning, Optimization, Data Analysis, Gradient Descent, Convergence Rate, Mathematical Algorithm, Computer Science, Convex Function, Optimization Technique, Algorithm Performance.