Saturday 01 March 2025

As researchers continue to push the boundaries of what’s possible with quantum computing, a new paper has shed light on some of the biggest hurdles holding back progress in this field. Quantum machine learning, which aims to harness the power of quantum computers for tasks like image recognition and natural language processing, has been plagued by issues with data loading and training.

The problem is that classical computers can handle massive datasets, but quantum computers struggle to process them efficiently. This means researchers have had to get creative when trying to cram large amounts of data onto these machines. In the past, this has often meant using clever tricks like compressing data or relying on specialized algorithms. But what if there was a way to make it all easier?

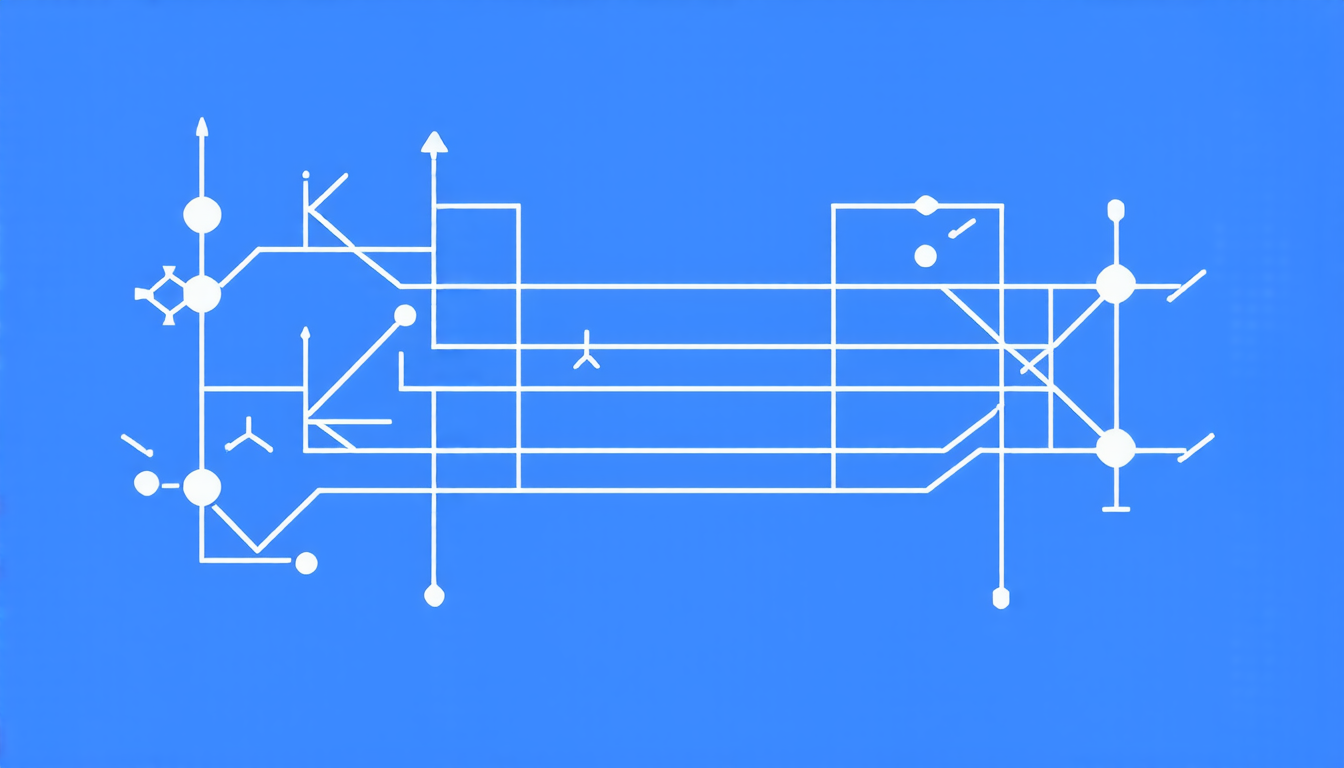

Enter bit-encoding, a new technique that allows quantum computers to load and process larger datasets with greater ease. By encoding binary data (strings of 1s and 0s) directly into quantum states, researchers can transfer large amounts of information onto the machine in a single operation. This not only cuts the time spent on data loading but also opens up new possibilities for the kinds of tasks these machines can tackle.
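To make the idea of turning bits into quantum states concrete, here is a minimal sketch in plain Python. The article does not spell out the encoding, so this assumes the simplest reading, often called basis encoding: an n-bit string selects one of the 2^n computational basis states of n qubits. The function names (`check_bits`, `basis_state`, `dataset_superposition`) are hypothetical, and the paper's actual scheme may differ.

```python
import math
from typing import List


def check_bits(bits: str) -> None:
    """Reject anything that is not a non-empty string of '0'/'1' characters."""
    if not bits or any(ch not in "01" for ch in bits):
        raise ValueError(f"not a bit string: {bits!r}")


def basis_state(bits: str) -> List[float]:
    """State vector of |bits> on len(bits) qubits: a single amplitude of 1."""
    check_bits(bits)
    state = [0.0] * (2 ** len(bits))
    state[int(bits, 2)] = 1.0  # the bit string, read as a binary index
    return state


def dataset_superposition(samples: List[str]) -> List[float]:
    """Equal-weight superposition over the distinct bit strings in samples.

    Assumption for illustration only: the article does not say that the
    dataset is loaded this way.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    distinct = sorted(set(samples))
    for sample in distinct:
        check_bits(sample)
    n_qubits = len(distinct[0])
    if any(len(sample) != n_qubits for sample in distinct):
        raise ValueError("all samples must have the same length")
    amplitude = 1.0 / math.sqrt(len(distinct))  # keeps the state normalized
    state = [0.0] * (2 ** n_qubits)
    for sample in distinct:
        state[int(sample, 2)] = amplitude
    return state
```

Calling `basis_state("101")` puts the single nonzero amplitude at index 5 of an 8-entry vector. The vector length doubles with every added bit, which is a cost of simulating the state classically. On hardware, n bits occupy n qubits.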

But that’s not all. The same researchers have also developed a training technique called exact coordinate updates, which is designed to guarantee that quantum models converge to a local minimum, a crucial property in machine learning. Unlike traditional gradient-based methods, the approach is reported to avoid getting stuck in barren plateaus, those frustrating regions where gradients vanish and progress stalls.
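The following sketch shows one way an exact update of a single parameter can work. It is not taken from the paper. It assumes that, with all other parameters held fixed, the cost depends on the chosen parameter as a·cos(θ) + b·sin(θ) + c, which is the case for many circuits built from standard rotation gates. Three evaluations then determine a, b and c, and the minimizer follows in closed form. The toy cost function and all names here are hypothetical.

```python
import math
from typing import Callable, List, Tuple

Cost = Callable[[List[float]], float]


def exact_coordinate_update(cost: Cost, theta: List[float], k: int) -> List[float]:
    """Return a copy of theta with theta[k] moved to its exact minimizer.

    Assumes cost is a*cos(theta[k]) + b*sin(theta[k]) + c along coordinate k.
    """
    def at(value: float) -> float:
        trial = list(theta)
        trial[k] = value
        return cost(trial)

    f_zero, f_half, f_pi = at(0.0), at(math.pi / 2), at(math.pi)
    c = (f_zero + f_pi) / 2
    a = (f_zero - f_pi) / 2
    b = f_half - c
    updated = list(theta)
    if math.hypot(a, b) > 1e-12:  # flat direction: leave the value alone
        # a*cos(t) + b*sin(t) is smallest opposite the direction (a, b)
        updated[k] = math.atan2(-b, -a)
    return updated


def coordinate_descent(cost: Cost, theta: List[float],
                       sweeps: int = 5) -> Tuple[List[float], List[float]]:
    """Cycle through the coordinates, recording the cost after every update."""
    history = [cost(theta)]
    for _ in range(sweeps):
        for k in range(len(theta)):
            theta = exact_coordinate_update(cost, theta, k)
            history.append(cost(theta))
    return theta, history


def toy_cost(theta: List[float]) -> float:
    """Hypothetical two-parameter cost, sinusoidal in each coordinate."""
    return math.cos(theta[0]) * math.cos(theta[1]) + 0.5 * math.sin(theta[0])
```

Because each step jumps to the best value along one coordinate, the recorded cost never increases from one update to the next. The update uses cost evaluations rather than gradient estimates, which is the property the article contrasts with gradient-based training. The sketch demonstrates only this monotone decrease on a toy function, not the convergence guarantee described in the paper.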

The team has also tackled the problem of initializing large models, using what they call sub-net initialization. By training smaller models first and then using the trained parameters to seed larger ones, researchers can avoid starting every large model from scratch.
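As a rough illustration of seeding a larger model from a smaller one, the sketch below copies trained parameters into a bigger parameter list and sets the new entries to zero. The article does not describe how the parameters are transferred, so zero-padding is an assumption. It is chosen here because a rotation gate with angle zero acts as the identity, so the larger model starts out reproducing the smaller one. The one-qubit toy model and the function names are hypothetical.

```python
import math
from typing import List


def expectation_z(angles: List[float]) -> float:
    """<Z> after applying RY(angle) for each angle to a qubit starting in |0>.

    Successive RY rotations on one qubit add their angles, so the
    expectation value is cos(sum of angles).
    """
    return math.cos(sum(angles))


def seed_larger_model(small_angles: List[float], large_size: int) -> List[float]:
    """Initialize a larger parameter list from a trained smaller one.

    Assumption: the extra parameters start at 0.0 so the added rotations
    act as the identity until training moves them.
    """
    if large_size < len(small_angles):
        raise ValueError("the larger model needs at least as many parameters")
    padding = [0.0] * (large_size - len(small_angles))
    return list(small_angles) + padding
```

With `small = [0.4, -1.3]`, the list returned by `seed_larger_model(small, 5)` gives the same value of `expectation_z` as `small` itself, so training the larger model begins from the point the smaller one had already reached.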

These innovations could have significant implications for quantum machine learning as a whole. With bit-encoding, researchers can work with bigger datasets, which should help them develop more accurate and robust models. Exact coordinate updates are meant to make training converge reliably, reducing the risk of stagnation. And sub-net initialization lets larger models build on what smaller ones have already learned.

The potential applications are vast – from medical research to finance, from natural language processing to computer vision. Quantum machine learning has long held promise as a way to tackle complex problems that have stumped classical computers for years. Now, with these innovations in place, researchers are one step closer to realizing that potential.

Cite this article: “New Techniques Unlock Quantum Machine Learning’s Potential”, The Science Archive, 2025.

Quantum Computing, Machine Learning, Data Loading, Training, Quantum States, Binary Data, Exact Coordinate Updates, Sub-Net Initialization, Barren Plateaus, Convergence.