Saturday 01 March 2025

The quest for better image recognition algorithms has led researchers from convolutional neural networks (CNNs) to contrastive learning, a technique that trains a model to recognize when two altered views come from the same image. In recent years, the focus has shifted towards medical imaging, where the stakes are higher and the challenges more complex.

One such challenge is the dense distribution of medical image data. Unlike natural images, which vary greatly in color, texture, and composition, medical images tend to be uniform and structured, so any two of them look much more alike. This can make it difficult for CNNs to learn meaningful features, leading to suboptimal performance on real-world tasks.

A team of researchers has set out to tackle this problem with a simple yet effective solution: reducing the scale of data augmentation in contrastive learning. The idea is that when the image transformations applied during training are less intense, CNNs can better learn to distinguish between similar and dissimilar images.
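
To make "scale of augmentation" concrete, here is a minimal sketch that treats it as the range of crop areas used when generating two views of one image. This is only one possible reading: the article does not list the transformations or settings the team used, so the two ranges (`STRONG_SCALE`, `WEAK_SCALE`) and all function names below are hypothetical.

```python
import math
import random

# Hypothetical crop-area ranges, chosen only for illustration.
STRONG_SCALE = (0.08, 1.0)  # crops may keep as little as 8% of the image
WEAK_SCALE = (0.6, 1.0)     # crops always keep at least 60% of the image


def sample_crop_box(width, height, scale_range, rng):
    """Sample a crop whose area is a random fraction of the full image."""
    low, high = scale_range
    area_fraction = rng.uniform(low, high)
    # Preserve the aspect ratio so the crop always fits inside the image.
    side_scale = math.sqrt(area_fraction)
    crop_w = max(1, round(width * side_scale))
    crop_h = max(1, round(height * side_scale))
    left = rng.randint(0, width - crop_w)
    top = rng.randint(0, height - crop_h)
    return left, top, crop_w, crop_h


def box_iou(a, b):
    """Intersection over union of two (left, top, width, height) boxes."""
    overlap_w = max(0, min(a[0] + a[2], b[0] + b[2]) - max(a[0], b[0]))
    overlap_h = max(0, min(a[1] + a[3], b[1] + b[3]) - max(a[1], b[1]))
    intersection = overlap_w * overlap_h
    union = a[2] * a[3] + b[2] * b[3] - intersection
    return intersection / union


def mean_view_iou(scale_range, width=512, height=512, trials=2000, seed=0):
    """Average overlap between two independently cropped views of one image."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        first = sample_crop_box(width, height, scale_range, rng)
        second = sample_crop_box(width, height, scale_range, rng)
        total += box_iou(first, second)
    return total / trials


if __name__ == "__main__":
    print("strong:", round(mean_view_iou(STRONG_SCALE), 3))
    print("weak:  ", round(mean_view_iou(WEAK_SCALE), 3))
```

With the weaker range, the two views of an image share far more of the same pixels on average, which is the sense in which the augmentation has been "scaled back".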

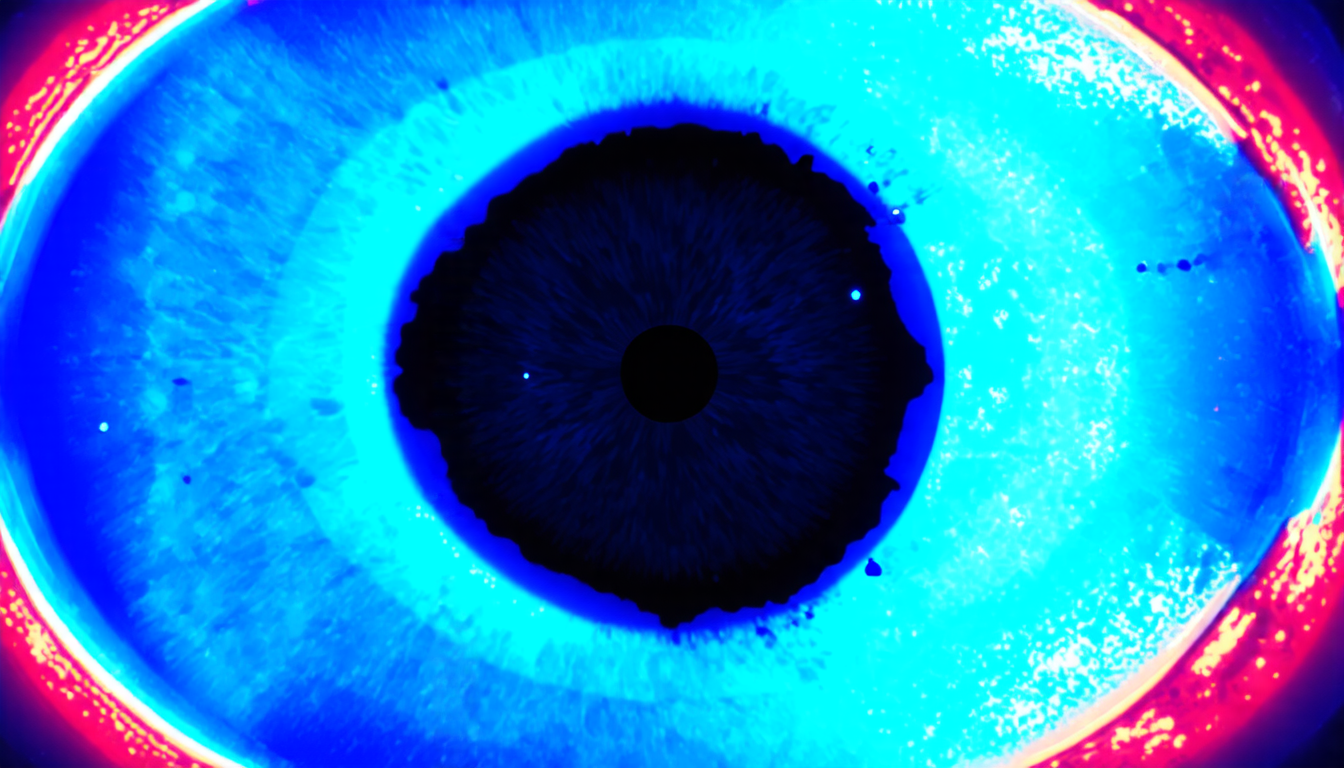

To test their hypothesis, the team used DINO, a popular self-supervised pre-training method in the contrastive learning family, and pre-trained it on a large dataset of retinal images. They then fine-tuned the model on various downstream tasks, including disease diagnosis, and compared its performance to that of models pre-trained with stronger augmentation strategies.

The results were striking: the model pre-trained with weaker augmentation outperformed the models pre-trained with stronger augmentation in most cases. On the MESSIDOR-2 dataset, for example, it achieved an area under the receiver operating characteristic curve (AUROC) of 0.848, compared to 0.838 for the strong augmentation strategy.
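
For readers unfamiliar with the metric, AUROC can be read as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. The sketch below computes it directly from that definition. The labels and scores are made up for illustration and have nothing to do with MESSIDOR-2, and the function name `auroc` is our own.

```python
def auroc(labels, scores):
    """AUROC as the chance that a positive case outscores a negative one."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Made-up labels (1 = disease present) and model scores, for illustration only.
example_labels = [0, 0, 1, 1]
example_scores = [0.10, 0.40, 0.35, 0.80]
print(auroc(example_labels, example_scores))  # 3 of 4 pairs ranked correctly: 0.75
```

On this scale, 0.5 corresponds to random guessing and 1.0 to a perfect ranking, so a difference between 0.838 and 0.848 is a gain of one percentage point in correctly ordered pairs.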

So why does this approach work? The researchers suggest that with a reduced scale of data augmentation, CNNs can better learn to cluster similar images together and separate them from dissimilar ones. Because medical images are densely distributed, stronger augmentation strategies make it more difficult for CNNs to learn meaningful features.
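
A toy analogy may help with the intuition, though it is not the researchers' experiment. Assume each image is a single point on a line, an augmented view is that point shifted by random noise, and a view counts as "identifiable" if its nearest image is still the one it came from. The spacing and strength values, and the function `identifiable_fraction`, are hypothetical.

```python
import random


def identifiable_fraction(spacing, strength, n_images=50, views_per_image=20, seed=0):
    """Share of augmented views whose nearest image is their own source."""
    rng = random.Random(seed)
    # Images are evenly spaced points; a small spacing means a dense dataset.
    images = [i * spacing for i in range(n_images)]
    correct = 0
    total = 0
    for index, image in enumerate(images):
        for _ in range(views_per_image):
            # An augmented view is the image shifted by bounded random noise.
            view = image + rng.uniform(-strength, strength)
            nearest = min(range(n_images), key=lambda j: abs(images[j] - view))
            correct += nearest == index
            total += 1
    return correct / total


if __name__ == "__main__":
    print("sparse data, strong augmentation:", identifiable_fraction(2.0, 0.5))
    print("dense data, strong augmentation: ", identifiable_fraction(0.1, 0.5))
    print("dense data, weak augmentation:   ", identifiable_fraction(0.1, 0.04))
```

In this simplified picture, strong augmentation is harmless when images are far apart, but once they are packed closely together the same amount of distortion pushes views past their neighbors, and only a weaker setting keeps each view tied to its source.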

The implications of these findings are significant. Medical imaging is a critical field that relies heavily on accurate image recognition algorithms, particularly in tasks such as disease diagnosis. By developing models that can better learn from dense data distributions, researchers hope to improve the accuracy and reliability of these algorithms, ultimately leading to better patient outcomes.

It’s worth noting that this approach is not without its limitations. For example, it may not generalize well to other medical imaging modalities or tasks. However, as a starting point for further research, it offers a promising direction for the development of more accurate image recognition algorithms in medical imaging.

Cite this article: “Scaling Back Data Augmentation for Improved Medical Image Recognition”, The Science Archive, 2025.

Medical Imaging, Convolutional Neural Networks, Contrastive Learning, Data Augmentation, Image Recognition, Disease Diagnosis, Retinal Images, DINO Model, AUROC, MESSIDOR-2 Dataset