Thursday 23 January 2025

The latest generation of NVIDIA GPUs brings significant gains in performance and power efficiency, but understanding how these chips work and optimizing code for them remains a complex task. In a recent study, researchers benchmarked the inner workings of the Hopper architecture, shedding light on its memory hierarchy, its tensor core performance, and its new DPX instructions for dynamic programming.

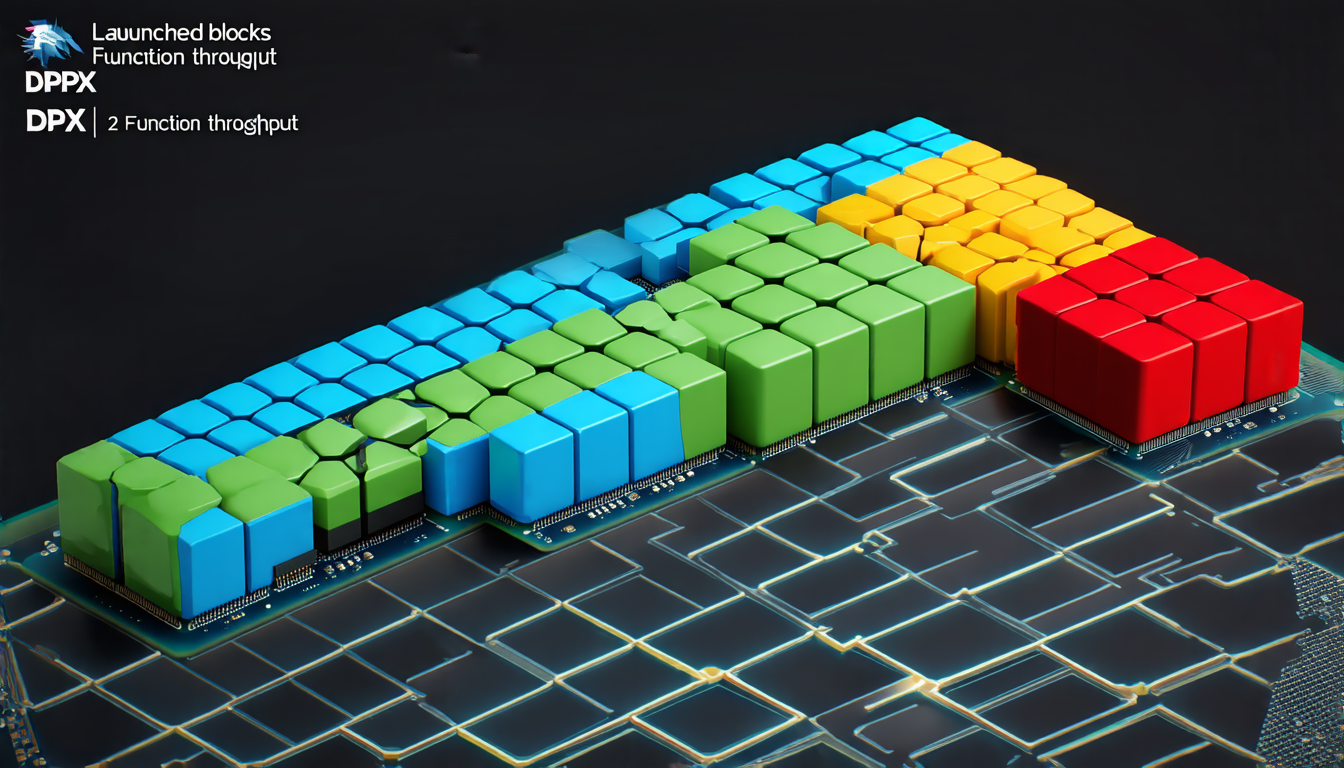

One key finding is that Hopper's partitioned L2 cache plays a crucial role in program performance, particularly for distributed shared memory access patterns. Separately, the researchers found that the throughput of DPX functions, which accelerate dynamic programming, changes significantly with the number of thread blocks launched.
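
One generic reason that block count affects throughput on any GPU is "wave quantization". This is offered as an illustration only and is not necessarily the mechanism the study identified. A GPU can only run `num_sms * blocks_per_sm` blocks at once, so a launch that spills a few blocks into an extra wave leaves most SMs idle during that wave. The sketch below assumes 132 SMs (the SM count of the H100 SXM part) and one resident block per SM; `wave_efficiency` is a hypothetical helper, not part of any NVIDIA API.

```python
import math

def wave_efficiency(num_blocks: int, num_sms: int, blocks_per_sm: int) -> float:
    """Fraction of block slots doing useful work, summed over all waves.

    A wave is the set of blocks that can be resident on the GPU at once
    (num_sms * blocks_per_sm). A partially filled last wave leaves SMs idle.
    """
    slots_per_wave = num_sms * blocks_per_sm
    waves = math.ceil(num_blocks / slots_per_wave)
    return num_blocks / (waves * slots_per_wave)

# Assumed configuration for illustration: 132 SMs, one resident block per SM.
NUM_SMS = 132
for blocks in (132, 133, 264, 1000):
    print(blocks, round(wave_efficiency(blocks, NUM_SMS, 1), 3))
```

Launching 133 blocks instead of 132 roughly halves the fraction of useful slots, which is why block-count sweeps are a standard part of GPU microbenchmarking.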

Another important aspect of the Hopper architecture is its support for half-precision floating-point data types. The study found that lower-precision types can offer significant performance advantages, especially where memory bandwidth is the bottleneck: a 16-bit value occupies half the bytes of a 32-bit one, so a bandwidth-bound kernel moves half as much data. This approach does not suit every workload, however, and each application's accuracy requirements must be weighed carefully.
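
A back-of-the-envelope sketch of the bandwidth argument, assuming a purely bandwidth-bound kernel that reads each element exactly once. The workload size and the 3000 GB/s bandwidth are illustrative assumptions, not figures from the study, and `stream_time_ms` is a hypothetical helper.

```python
# Bytes per element for common GPU floating-point types.
BYTES_PER_ELEMENT = {"fp32": 4, "fp16": 2, "bf16": 2}

def stream_time_ms(num_elements: int, dtype: str, bandwidth_gb_s: float) -> float:
    """Lower bound on the time to read num_elements once from memory, in ms."""
    total_bytes = num_elements * BYTES_PER_ELEMENT[dtype]
    return total_bytes / (bandwidth_gb_s * 1e9) * 1e3

N = 1_000_000_000        # hypothetical workload: one billion elements
BANDWIDTH_GB_S = 3000.0  # assumed memory bandwidth, illustrative only

for dt in ("fp32", "fp16"):
    print(dt, round(stream_time_ms(N, dt, BANDWIDTH_GB_S), 3), "ms")
```

Under these assumptions the FP16 version needs half the time of the FP32 version. Real kernels rarely hit this ideal, since compute, latency, and cache effects also matter.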

The researchers also measured how different precisions affect AI performance across several GPU architectures. Their experiments showed that lower-precision data types can shorten execution times and reduce memory usage, but may compromise accuracy in some situations.
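
To make the accuracy risk concrete, the sketch below emulates IEEE 754 half precision with Python's `struct` module (format code `"e"`) and sums 4096 ones, rounding to FP16 after every addition. FP16 has an 11-bit significand, so once the running total reaches 2048, adding 1 rounds back to 2048 and the sum stalls. This is a generic illustration, not an experiment from the study; `to_fp16` and `sum_fp16` are hypothetical helpers.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def sum_fp16(values):
    """Accumulate in half precision, rounding after every addition."""
    acc = 0.0
    for v in values:
        acc = to_fp16(acc + to_fp16(v))
    return acc

values = [1.0] * 4096
print(sum(values))       # double-precision accumulation
print(sum_fp16(values))  # half-precision accumulation stalls at 2048
```

This failure mode is one reason mixed-precision code commonly keeps FP16 inputs but accumulates in FP32, a combination tensor cores support.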

The study also benchmarked the DPX instructions individually. Not all of them delivered a significant speedup on Hopper, so developers should check which specific instructions their algorithm relies on before expecting a gain.
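
Dynamic-programming recurrences such as Smith-Waterman local alignment are built from "add, then take the max" steps, which is the pattern DPX instructions fuse. The CPU-only Python sketch below shows that shape. The helpers `viaddmax` and `vimax3` are hypothetical and only mirror what we understand CUDA 12's `__viaddmax_s32` (max(a + b, c)) and `__vimax3_s32` (three-way max) to compute; check the CUDA Math API documentation before relying on those names. The scoring parameters are also assumptions.

```python
def viaddmax(a: int, b: int, c: int) -> int:
    """max(a + b, c): the fused add-then-max pattern (cf. __viaddmax_s32)."""
    return max(a + b, c)

def vimax3(a: int, b: int, c: int) -> int:
    """Three-way max (cf. __vimax3_s32)."""
    return max(a, b, c)

def smith_waterman(s: str, t: str, match: int = 2, mismatch: int = -1, gap: int = 1) -> int:
    """Best local-alignment score of s and t with a linear gap penalty."""
    rows, cols = len(s) + 1, len(t) + 1
    H = [[0] * cols for _ in range(rows)]
    best = 0
    for i in range(1, rows):
        for j in range(1, cols):
            sub = match if s[i - 1] == t[j - 1] else mismatch
            # max(up - gap, left - gap) expressed as one add-then-max.
            from_gap = viaddmax(H[i - 1][j], -gap, H[i][j - 1] - gap)
            # Diagonal move vs. gap moves vs. 0; clamping at 0 makes it local.
            H[i][j] = vimax3(H[i - 1][j - 1] + sub, from_gap, 0)
            best = max(best, H[i][j])
    return best

print(smith_waterman("GATTACA", "TTAC"))
```

Whether a given recurrence benefits on Hopper depends on whether its inner step maps onto one of the instructions that are actually accelerated, which is the kind of per-instruction difference the study reports.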

The researchers’ findings have important implications for developers working with NVIDIA GPUs. By understanding the memory hierarchy, tensor core behavior, and the limitations of DPX instructions, they can tune their code to take full advantage of the latest GPU architectures. This matters especially in deep learning, where fast execution and low memory usage are critical for large-scale models.

The study also highlights the need for more research into optimizing GPU performance for dynamic programming and other irregular applications. As GPUs continue to play an increasingly important role in a wide range of fields, including artificial intelligence, machine learning, and data analytics, it is essential that developers have access to tools and techniques that allow them to effectively utilize these powerful processors.

Overall, the researchers’ work provides valuable insights into the inner workings of the Hopper architecture and its potential for optimizing GPU performance.

Cite this article: “Unlocking the Potential of NVIDIA’s Hopper Architecture”, The Science Archive, 2025.

NVIDIA, GPUs, Hopper Architecture, Memory Hierarchy, Tensor Core, Dynamic Programming, DPX Instructions, Half-Precision Floating-Point, AI Performance, GPU Optimization