Thursday 23 January 2025

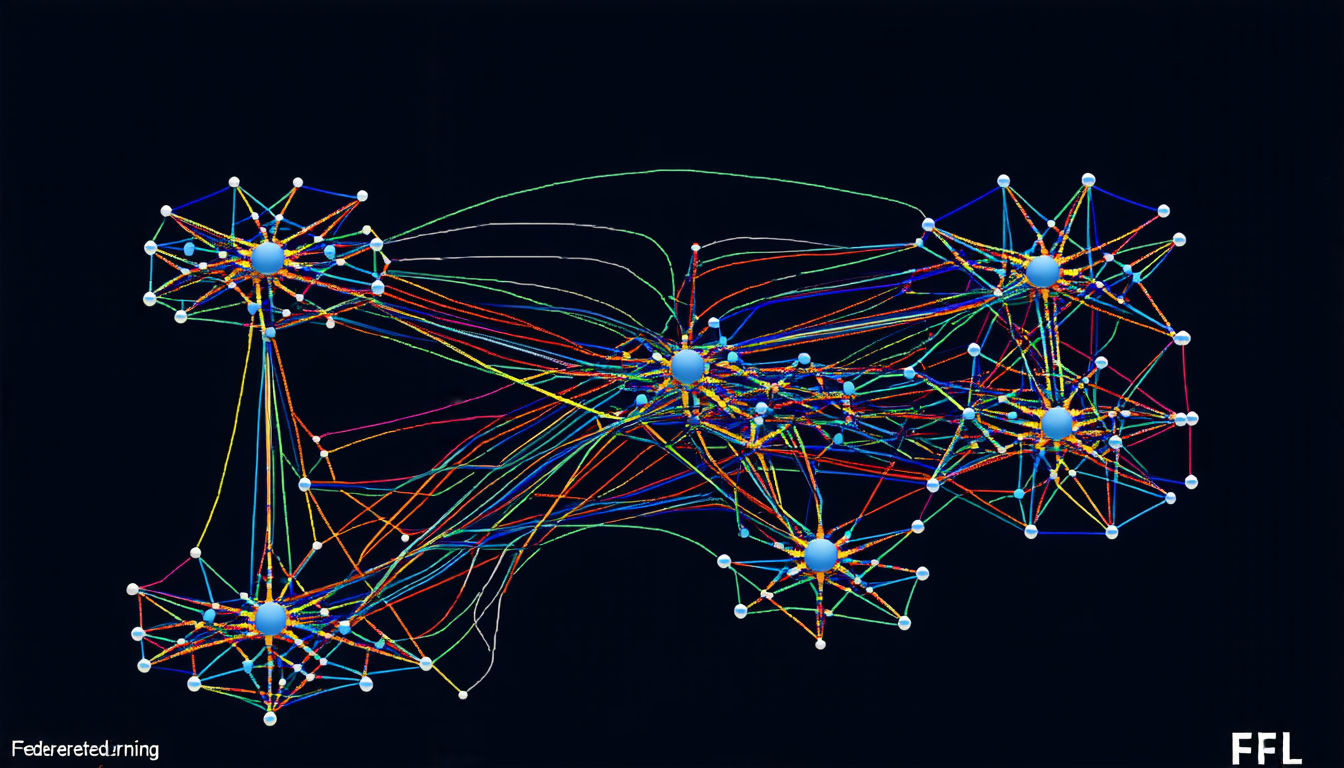

As Federated Learning (FL) matures, a crucial issue has emerged: how to ensure that each participant is rewarded in proportion to what it contributes to collaborative training. The problem is particularly challenging when dealing with imbalanced data, where some participants hold significantly more data than others.

A team of researchers has proposed an innovative solution called CYCle (Collaborative Yielding for Collective learning), which aims to overcome the limitations of existing FL algorithms. By leveraging a novel reputation system and adaptive gradient alignment, CYCle encourages cooperation among participants while minimizing the negative impact of imbalanced data.

In their study, the team tested CYCle on two popular image classification datasets: CIFAR-10 and CIFAR-100. They compared its performance with several existing FL algorithms, including FedAvg, VPDL, and CFFL. According to the reported results, CYCle consistently outperformed these competitors in terms of mean collaboration gain (MCG) and fairness, while maintaining competitive accuracy.
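The article does not spell out how collaboration gain is computed. A minimal sketch, assuming MCG is the average over participants of the accuracy each gains by collaborating instead of training alone, might look like this. The function names and the accuracy values are hypothetical and chosen only for illustration; they are not results from the study.

```python
from statistics import mean


def collaboration_gains(standalone_acc, collaborative_acc):
    """Per-participant gain: accuracy with collaboration minus standalone accuracy."""
    if len(standalone_acc) != len(collaborative_acc):
        raise ValueError("accuracy lists must have the same length")
    return [c - s for s, c in zip(standalone_acc, collaborative_acc)]


def mean_collaboration_gain(standalone_acc, collaborative_acc):
    """Average gain across all participants (assumed definition of MCG)."""
    return mean(collaboration_gains(standalone_acc, collaborative_acc))


# Made-up accuracies for three participants, for illustration only.
standalone = [0.50, 0.60, 0.70]
collaborative = [0.62, 0.68, 0.73]

gains = collaboration_gains(standalone, collaborative)
mcg = mean_collaboration_gain(standalone, collaborative)
print(f"per-participant gains: {[round(g, 2) for g in gains]}")
print(f"mean collaboration gain: {mcg:.4f}")
```

Under this definition, a positive value means participants are on average better off collaborating, while a negative per-participant gain flags someone who would have done better alone.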

One key innovation behind CYCle is its reputation system, which assigns a score to each participant based on their contribution to the collective learning process. This score is then used to adjust the gradient alignment between participants, promoting cooperation and discouraging free riding. The team demonstrated that this approach effectively mitigates the negative impact of imbalanced data, ensuring that all participants benefit from collaboration.
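To make the idea concrete, here is a minimal sketch of a reputation score driven by gradient alignment. It is not the authors' implementation: it assumes alignment is measured as the cosine similarity between two participants' model updates, that negative alignment earns no credit, and that scores are smoothed with a simple moving average. The names `update_reputation`, `sharing_weights`, and the `momentum` parameter are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two update vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def update_reputation(old_score, own_update, peer_update, momentum=0.5):
    """Blend the previous score with the current alignment (assumed update rule)."""
    alignment = cosine_similarity(own_update, peer_update)
    credit = max(0.0, alignment)  # assumption: opposing updates earn no credit
    return momentum * old_score + (1.0 - momentum) * credit


def sharing_weights(scores):
    """Normalize reputation scores into weights that sum to 1."""
    total = sum(scores)
    if total == 0.0:
        return [1.0 / len(scores)] * len(scores)
    return [s / total for s in scores]


# Toy two-dimensional updates, for illustration only.
own = [1.0, 0.0]
helpful_peer = [2.0, 0.0]    # points the same way as `own`
harmful_peer = [-1.0, 0.0]   # points the opposite way

rep_helpful = update_reputation(0.5, own, helpful_peer)
rep_harmful = update_reputation(0.5, own, harmful_peer)
weights = sharing_weights([rep_helpful, rep_harmful])
print(rep_helpful, rep_harmful, weights)
```

In this toy setup the aligned peer's score rises and the opposing peer's score falls, so a participant that contributes little or works against the group receives a smaller share of what is exchanged.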

The researchers also explored the effects of label flipping, a form of data poisoning in which some participants train on deliberately mislabeled data. They found that CYCle’s reputation system and adaptive gradient alignment enable it to adapt to such scenarios, maintaining fairness and cooperation even when faced with malicious behavior.
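For readers unfamiliar with the attack, the sketch below shows one common way to simulate label flipping in an experiment: a chosen fraction of a participant's labels is replaced with a different, randomly selected class. The article does not describe the exact flipping scheme used in the study, so the function `flip_labels`, its parameters, and the 20% flip rate are assumptions for illustration.

```python
import random


def flip_labels(labels, num_classes, flip_fraction, seed=0):
    """Return a copy of `labels` with a fraction replaced by a different class."""
    if num_classes < 2:
        raise ValueError("need at least two classes to flip a label")
    if not 0.0 <= flip_fraction <= 1.0:
        raise ValueError("flip_fraction must be between 0 and 1")
    rng = random.Random(seed)
    flipped = list(labels)
    n_flip = round(flip_fraction * len(flipped))
    for idx in rng.sample(range(len(flipped)), n_flip):
        # A non-zero offset modulo num_classes always lands on a wrong class.
        offset = rng.randrange(1, num_classes)
        flipped[idx] = (flipped[idx] + offset) % num_classes
    return flipped


# Toy labels for a 10-class problem such as CIFAR-10.
clean = [i % 10 for i in range(100)]
noisy = flip_labels(clean, num_classes=10, flip_fraction=0.2)
changed = sum(1 for a, b in zip(clean, noisy) if a != b)
print(f"{changed} of {len(clean)} labels flipped")
```

A participant training on `noisy` instead of `clean` produces updates that tend to disagree with those of honest participants, which is the kind of signal a reputation score based on alignment could pick up.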

A sensitivity analysis revealed that CYCle’s performance is robust to variations in its hyperparameters, providing a level of flexibility and reliability essential for real-world applications. The team’s findings suggest that CYCle can be successfully applied to a wide range of FL scenarios, including those involving imbalanced data and label flipping.

The implications of this research are significant: it offers a practical tool for promoting fairness and cooperation in FL, enabling participants to work together effectively even when faced with challenging data distribution issues. As the use of FL continues to grow, approaches like CYCle could play an important role in making collaborative training worthwhile for every participant.

Cite this article: “Federated Learning with Fairness: Introducing CYCle”, The Science Archive, 2025.

Federated Learning, Collaborative Learning, Imbalanced Data, Reputation System, Gradient Alignment, Fairness, Label Flipping, Sensitivity Analysis, Hyperparameters, AI Research